A/B testing

Make A/B testing effortless with simple strategies, test ideas, templates, and answers to your top questions.

A/B testing toolkit

What to A/B test: Ideas and examples

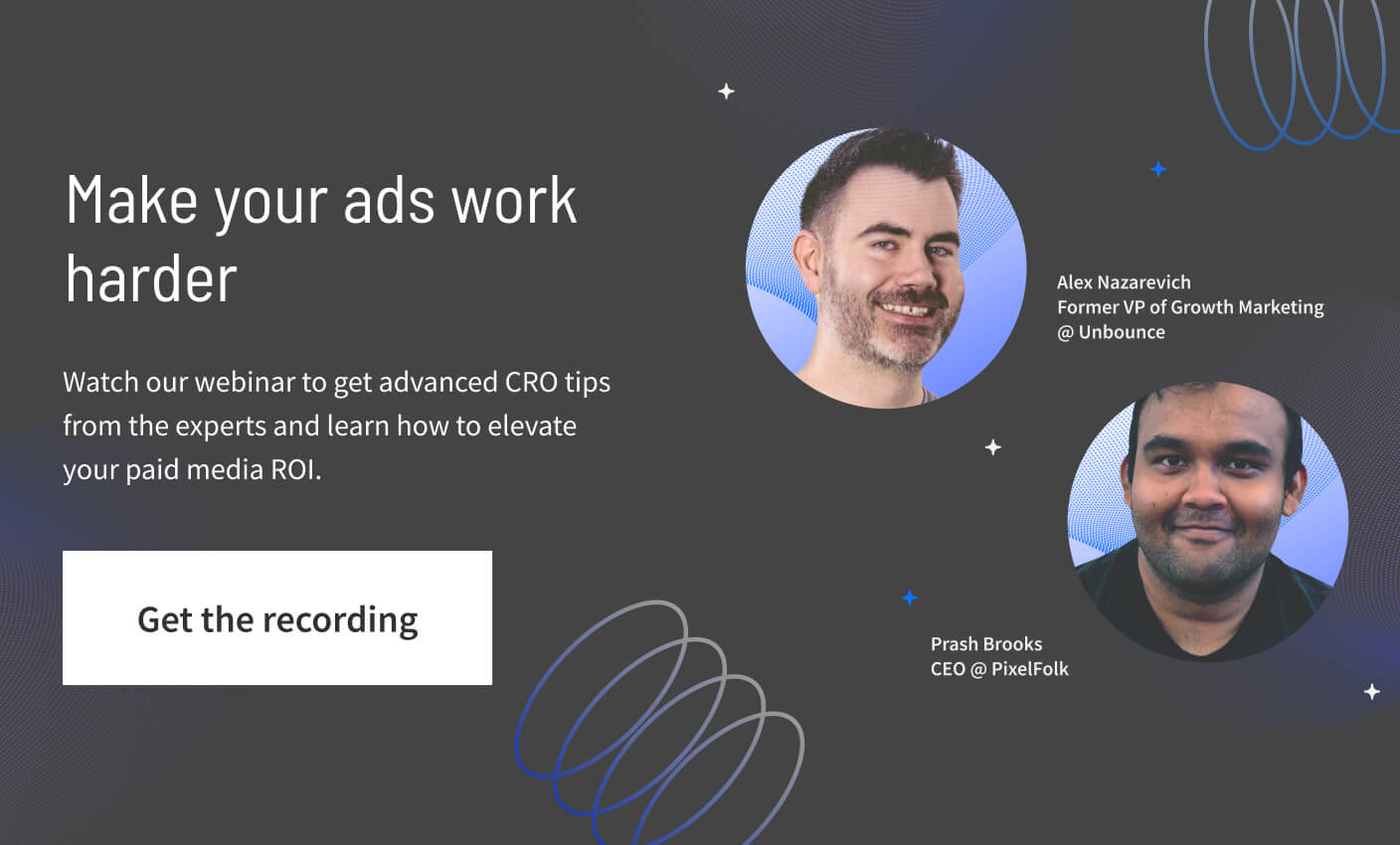

Level up your A/B testing skills

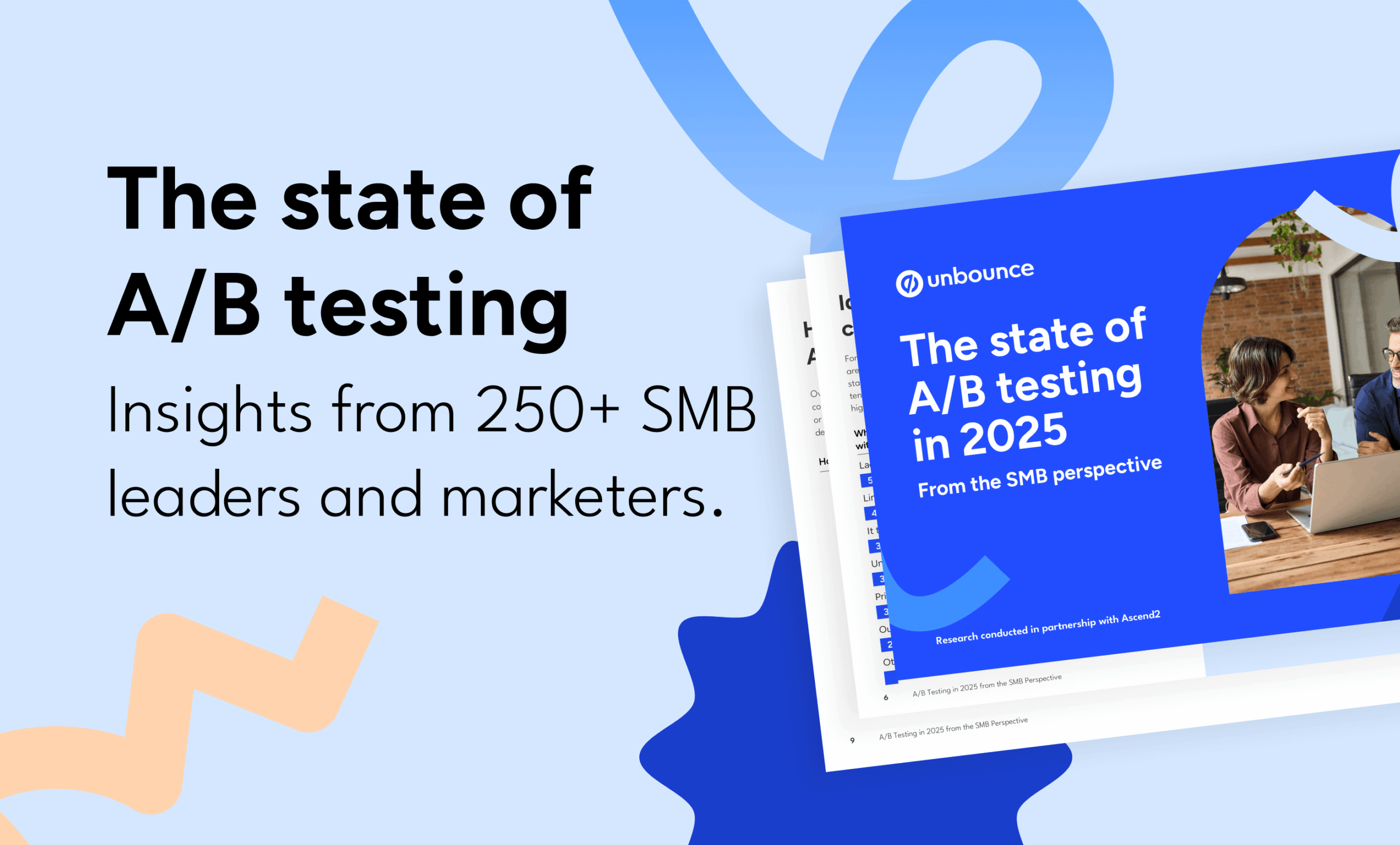

All posts about A/B testing