When it comes to conversion rate optimization, we typically begin by optimizing the top of the funnel – social media posting times, calls to action, landing page headlines and images.

But as we are becoming proficient at CRO in these areas, we need to start looking further down the funnel.

Why’s that?

People who have taken that first step and qualified themselves by signing up for your mailing list or for a free trial are incredibly valuable to your business. And a series of well-timed, well-written emails can be an incredibly powerful tool for welcoming new users and converting them into lifelong customers.

A/B testing can help you optimize your welcome messages – but you need to test more than just your subject line.

Here are seven more advanced A/B tests you should be running to optimize your welcome emails.

1. Test timing and frequency

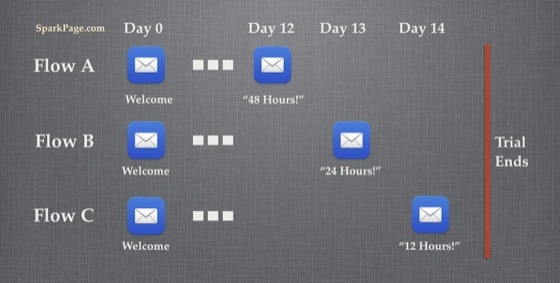

A new user has just signed up for a 14-day free trial. Your service and your emails now have 14 days to make them excited about becoming a paying customer.

So how many emails do you send?

One per day? That’s a bit spammy, isn’t it?

One per week? They’ll probably forget who you are by the time the second email arrives.

Heck, why not send five emails in the first day? Or three in the first three days, then none for a week?

As you start to ask questions like this, you’ll realize that any answer is just an assumption (or worse, a HiPPO – the highest-paid person’s opinion!) about email frequency.

And very often, your assumptions around what is too much or too little will be way off the mark.

This, of course, is where some good old-fashioned A/B testing comes in.

How to test frequency

Suggesting, “Why don’t we send five emails in the first day?” is just throwing spaghetti at the wall and seeing what might stick. Sure, you may find some conversion uplift, but it teaches you very little.

A better approach is to start with a hypothesis like this:

Insight: People who sign up to our free trial are trying to solve an urgent problem. So urgent, in fact, that it’s the number one thing on their to-do list this week. They’ll be evaluating our service and competitors intensely over the next 48 hours and running the first live trial within the next 72 hours.

Hypothesis: Heavily loading our welcome messaging within the first 72 hours will be welcome, timely and relevant for these users and will increase conversion rates.

Insight driven and very testable. Lovely.

This is better than the spaghetti approach because it helps you figure out the best timing and it teaches you something about your users. As an added bonus, the results from your test give you insight that can help develop new hypotheses and further tests and experiments.
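If you want to see what this looks like in practice, here is a minimal sketch of how you might split new trial users between two send schedules. Everything in it is an assumption made for illustration: the schedule names, the hour offsets, the experiment label and the `assign_variant` helper are all hypothetical, and your email tool may already handle the split for you.

```python
import hashlib

# Hypothetical send schedules: hours after signup at which each email goes out.
# Both arms send the same number of emails, so only the timing differs.
SCHEDULES = {
    "evenly_spaced": [0, 96, 192, 288],  # spread across the 14-day trial
    "front_loaded": [0, 24, 48, 72],     # the hypothesis: load the first 72 hours
}


def assign_variant(user_id: str, experiment: str = "welcome-frequency") -> str:
    """Deterministically map a user to one schedule, so they never switch arms."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    names = sorted(SCHEDULES)
    return names[int(digest, 16) % len(names)]


variant = assign_variant("user-123")
print(variant, SCHEDULES[variant])
```

Hashing the user ID (rather than picking at random on every send) means the same person always lands in the same arm, which keeps the comparison clean.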

In this article, Michael Aagaard talks about using insight from other case studies to generate hypotheses. His examples are for landing pages but the process is just the same.

2. Test urgency

Testing the timing of your messages is a great first test to run, but you’ll also want to start testing the content of these messages. We’re not going to discuss the words you can test in a subject line or on a CTA, because we all know how to do that.

Instead, let’s look at a tactic that many companies use in their emails to increase conversions: adding a sense of urgency.

Referencing time limits in your welcome emails can create a sense of hurry in your users and encourage them to act now.

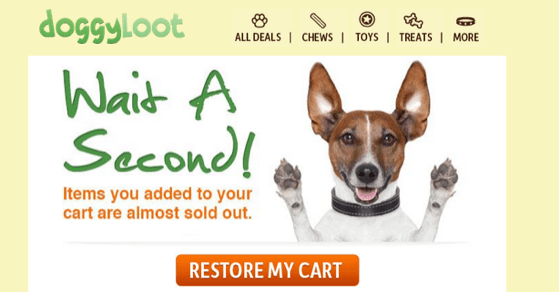

How Doggyloot uses urgency

Take a look at what happened when Doggyloot tested urgency in their shopping cart abandonment emails. In the screenshot below, you can see that they tell you that the “Items you added to your cart are almost sold out.”

When they added a sense of urgency and a clear call to action, Doggyloot’s abandonment emails became responsible for an additional day’s worth of revenue each month.

When I want to optimize a set of automated emails, I often start by experimenting with urgency. More often than not, it produces the biggest early uplifts.

How to test urgency

If you’re not sure where to start, you can start testing urgency in your emails by sending reminder emails at different time intervals.

A few things you should know before you get started:

- Always include a control group that doesn’t receive the email you’re testing. This control will act as a baseline and help you be certain that any reminder email – regardless of the timing – is better than no email at all. It also lets you isolate and measure the exact impact of each email on your conversion rate.

- The number of variations in your test should depend on the number of new signups you get. If you’re not getting many, start with two variations and use a statistical significance calculator. I usually let tests run for two or three weeks, and I pick the number of variations that will give me (roughly) 100 conversions each.
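To make those two rules of thumb concrete, here is a rough sketch. It assumes a standard two-sided, two-proportion z-test as a stand-in for whatever your significance calculator does, and it treats the "roughly 100 conversions each" guideline as a simple division. The function names and the example numbers are hypothetical.

```python
from math import erfc, sqrt


def max_arms(expected_signups: int, expected_conversion_rate: float,
             target_conversions_per_arm: int = 100) -> int:
    """How many arms (control included) the test window can support."""
    expected_conversions = expected_signups * expected_conversion_rate
    return int(expected_conversions // target_conversions_per_arm)


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / std_err
    return erfc(abs(z) / sqrt(2))


# Hypothetical numbers: control arm vs. one reminder-email variation.
print(max_arms(expected_signups=6000, expected_conversion_rate=0.05))
print(two_proportion_p_value(conv_a=100, n_a=1000, conv_b=150, n_b=1000))
```

A small p-value (many teams use 0.05 as the cutoff) suggests the difference between the control and the variation is unlikely to be noise. If `max_arms` comes back below two, the test window is probably too short for the traffic you have.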

3. Test personalization

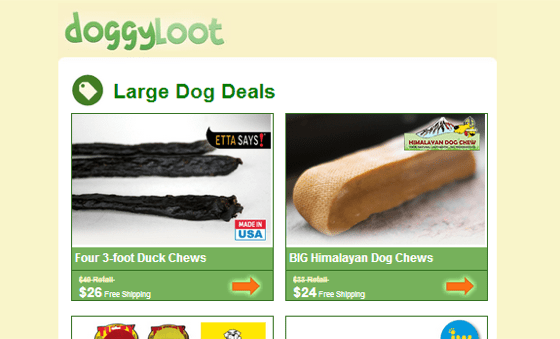

Doggyloot has seen a lot of success from their email marketing, in part because of their decision to run personalization and segmentation tests based on one very important point of doggy data: the size of the owner’s dog (small, medium or large).

They did this because they figured that Chihuahua owners wouldn’t be buying St. Bernard chew toys which were probably bigger than the dogs themselves!

Segmenting emails based on this data produced results that were pretty astounding. They found that emails that were targeted to large dog owners had click-through rates 410% higher than the average.

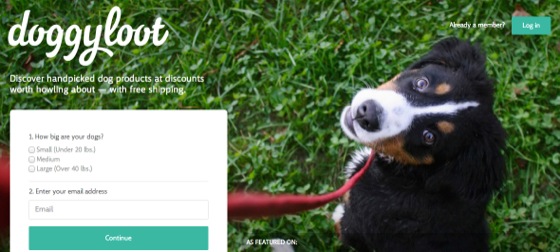

This single piece of data was so powerful that it’s now the only question they ask of every new user on their homepage (in addition to email):

Knowing and using one simple data point about your recipients can drastically improve your conversion rates from emails.

How to test personalization

What other personal details can you capture to experiment with in your welcome emails?

- You could start with details that are explicitly captured – like name, date of birth and company name.

- You could also experiment with information that is implicitly captured – like location, device type or level of product usage.

- If you want to try something a little more advanced, try personalizing based on the ad campaign that attracted them, the keyword they searched for or the landing page they signed up on.

All of these data points are fertile grounds for testing. The important thing is to get started!
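As a starting point, a personalization test can be as simple as choosing a template based on one data point, with a generic fallback when that data is missing. The sketch below borrows Doggyloot's dog-size example; the `dog_size` field, the template names and the `pick_template` helper are hypothetical and not taken from their actual setup.

```python
KNOWN_SIZES = {"small", "medium", "large"}


def pick_template(subscriber: dict, personalized_arm: bool) -> str:
    """Return a template name for this subscriber.

    Subscribers in the control arm, or with no usable data point,
    get the generic welcome email.
    """
    size = subscriber.get("dog_size")
    if personalized_arm and size in KNOWN_SIZES:
        return f"welcome_{size}_dog"
    return "welcome_generic"


print(pick_template({"email": "a@example.com", "dog_size": "large"}, personalized_arm=True))
print(pick_template({"email": "b@example.com"}, personalized_arm=True))
```

Keeping the generic template as the control arm lets you measure whether the personalized version actually beats it, rather than assuming it does.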

4. Test asking for feedback

In early 2012, I read this post by Derek Halpern, which led to one of the most valuable emails I ever wrote.

In the post, Derek recommends engaging new users (or blog subscribers) with an automatic email containing one simple question:

“What are you struggling with?”

Since his post was first published, I’ve used this technique again and again and I’ve found it to be extremely valuable.

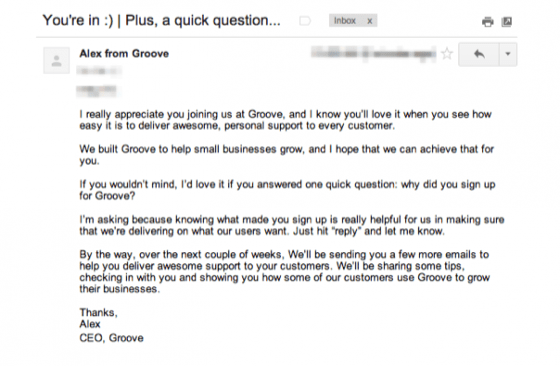

How Groove asks for feedback

Customers who have already converted have extremely valuable insight to offer. With my SaaS company, asking questions such as, “What’s the biggest part of your marketing day job that you’re struggling with right now?” played a big part in shaping our product roadmap.

Groove, a customer support SaaS company, started including a similar question in their confirmation email to all new trial users:

This automated email has proven quite useful to Groove:

“This email gets a 41% response rate and has given us more business insight than any email we’ve ever sent.”

Here are some of the responses they got:

They used these responses to refine their marketing messages, which ultimately doubled the conversion rate on their homepage.

They also have an interesting note about the timing of this feedback email, which they have tested:

“Interestingly, we found that the answer to this question varies widely between customers who answer it immediately after signup and those who have been using Groove for a week or longer. After a few days, the responses begin to skew more toward specific features within the app.

For that reason, we felt it was important to ask the question right away, so that the decision was still fresh in the user’s mind.”

Asking for feedback from your prospects is a great start, but you will learn more about your prospects once you start testing when and what you are asking.

5. Test delivering unexpected value

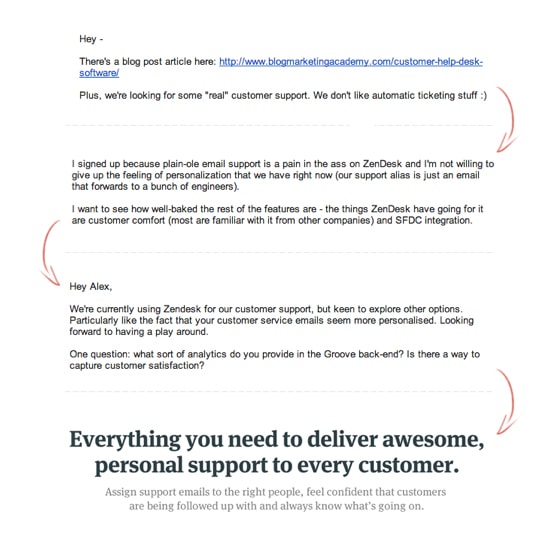

In a past life at a marketing consultancy, I had many clients who were musicians and record labels. Some of the musicians had large mailing lists and sent monthly newsletters.

These typically performed well but there was one problem: Whether you’d been a fan for 10 days or 10 years, you’d get the same monthly email. And because most people on the list weren’t new, the newsletter content tended to be geared to long-term fans – which was off-putting for a new subscriber.

When we started acknowledging new subscribers by putting basic welcome campaigns in place, the results were dramatic.

Instead of getting a stream of monthly newsletters, every new fan would get a series of emails introducing them to the artist and ultimately building an emotional connection. After a few weeks, new subscribers were presented with the opportunity to support the artist by buying the latest album or merch.

With one Irish artist, we started testing different motivating factors we could implement before “the ask.” If we could send them one email 24-48 hours before asking them to purchase, what type of email would be most likely to get them to subsequently convert?

Something with strong social proof, showing the thousands of others enjoying the music? Or a reminder about how people who purchase are supporting an artist?

It turns out that the single biggest boost (which doubled the subsequent purchase rate) was an unexpected gift.

About four weeks after joining the mailing list, a fan would get an “out of the blue” email with three free, exclusive-to-the-mailing-list acoustic songs and a personal note from the musician.

This was a true gift – it wasn’t contingent on any actions, which I think is what made it so successful.

It was just nice.

And people who got this gift were twice as likely to buy the album or a t-shirt when they got “the ask” two days later.

This is applicable to any business.

I’ve seen businesses send free ebooks or white papers or even just “free” access to an old webinar.

There’s lots of good psychology to suggest why this works (gifting begets reciprocation), but it’s easy to see how unexpected delight and value would make a potential customer happier to buy from you.

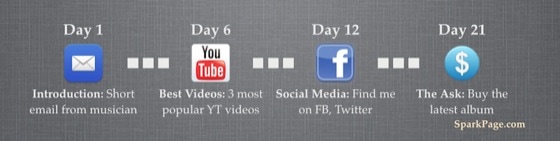

6. Test email design

By now, we know the importance of the email subject line and content. But what about the design of the email?

Should your welcome emails look like flashy ezine newsletters? Or like slightly toned down emails with brand colors, a logo and a few product images? What about a plain text email, with no branding or design at all?

Testing email design

The Obama 2012 campaign team found that the top-earning emails were consistently personal-style emails in plain text.

Here’s what Amelia Showalter, Obama’s director of analytics, had to say about it:

“I think it gave the impression that there were real people writing these emails, that it wasn’t focus-grouped to death. It was something more off the cuff.”

On the other hand, Wishpond recently blogged about tests they ran which found the opposite.

They had read a study which said that, “67% of consumers consider clear, detailed images to carry more weight than product information or customer ratings,” so they decided to test that in their emails.

Sure enough, they found that emails with images had a 60% higher click-through rate than text-only emails.

Now, I didn’t include these two conflicting examples to confuse you!

I included them because they illustrate that you can’t trust your gut, industry standards or what competitors are doing. You just have to test.

7. Test winning users back

What about when your customer’s free trial is over? What should you do if you’ve brought them through a well-timed, personalized and valuable welcome sequence and they still haven’t converted into a customer?

Just because they haven’t acted yet doesn’t mean they’re not interested in what you have to offer. It doesn’t hurt to try to win them back.

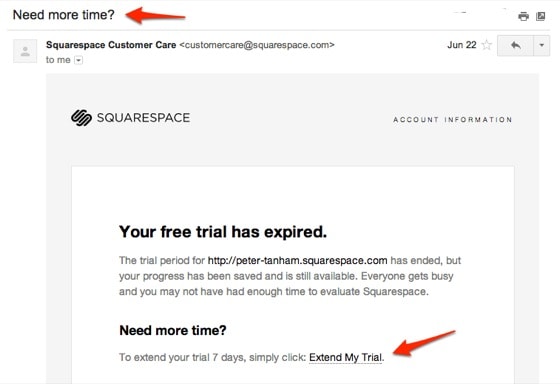

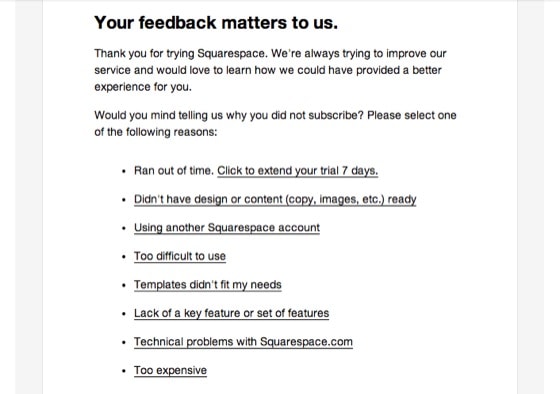

How Squarespace wins users back

Let’s take a look at two emails Squarespace sends to users who haven’t converted from trial to paying.

This first email, sent two days after the free trial ends, offers seven more days and a second chance for the customer to re-engage – a good combination of urgency and win back.

The second email, which comes a few days later, has the subject, “What could we have done better?” Even though it’s an email asking for feedback, it still gives the user the option to extend their trial and get back in.

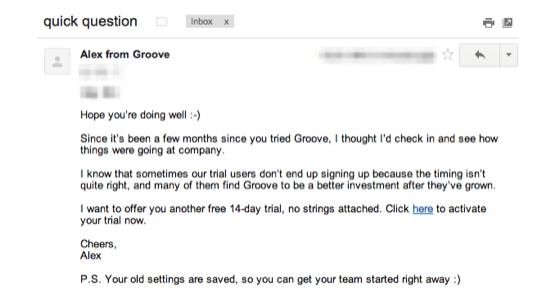

How Groove wins users back

Groove also experimented with win back emails as part of their onboarding flow. If a user hadn’t yet converted, they sent emails 7, 21 and 90 days after the end of their free trial.

90 days may seem far too long (will users even remember Groove?) – but when they tested it, they found that email gets a steady 2% conversion rate.

Not a massive number, but 2% is better than the 0% you’d get if you had let them go forever!
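If you want to try a similar cadence, the scheduling itself is simple. Here is a minimal sketch that uses the 7, 21 and 90 day offsets described above; the `win_back_dates` helper and the example date are hypothetical, and this is not how Groove actually implements it.

```python
from datetime import date, timedelta

# Days after the end of the free trial, per the cadence described above.
WIN_BACK_OFFSETS_DAYS = (7, 21, 90)


def win_back_dates(trial_end: date, converted: bool) -> list:
    """Return the dates on which win-back emails should go out."""
    if converted:
        return []  # paying customers should not receive win-back emails
    return [trial_end + timedelta(days=offset) for offset in WIN_BACK_OFFSETS_DAYS]


for send_date in win_back_dates(date(2024, 1, 1), converted=False):
    print(send_date.isoformat())
```

In a real sequence you would re-check the `converted` flag just before each send, so that someone who upgrades after the first email doesn't get the next two. The offsets themselves are a good candidate for a test.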

Have hypothesis, will test

The key here isn’t to copy the tactics used by the winning tests verbatim, but to find inspiration for powerful tests to run in your own welcome messaging.

To get started you need some customer insight – either from your existing support emails, your ongoing customer development or by simply asking subscribers what they’re struggling with. This insight will help you create hypotheses about where you can add messages that motivate, that remove barriers, that build relationships and that create urgency.

If you couple the landing page you’ve worked so hard on with an equally awesome welcome email series, you’ll find that more subscribers will happily convert. But the only way to make your welcome emails awesome is to start testing today. You can use Mailchimp, AWeber or SparkPage to build your first welcome sequence – they all have free trials.

So get to it! Which of these A/B tests will you start with?

Listen to Peter on the Call to Action podcast:
