A while back, WhichTestWon released the Conversion Rate Optimization Pop Quiz to see where marketers struggled most with CRO.

More than 4,500 people took the 25-question quiz.

Though the quiz was meant to be a fun exercise, it ended up revealing some troubling insights. The data from respondents showed that as CRO continues to grow as a discipline, many marketers have yet to learn its best practices.

Without further ado, here are the five questions that marketers most often got wrong, counting down to the most-missed question.

Note: This post contains spoilers for the pop quiz. If you want to accurately test your CRO knowledge, please take the test before reading on. You’ve been warned!

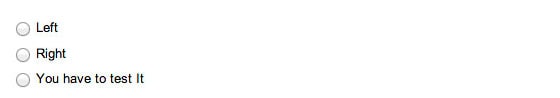

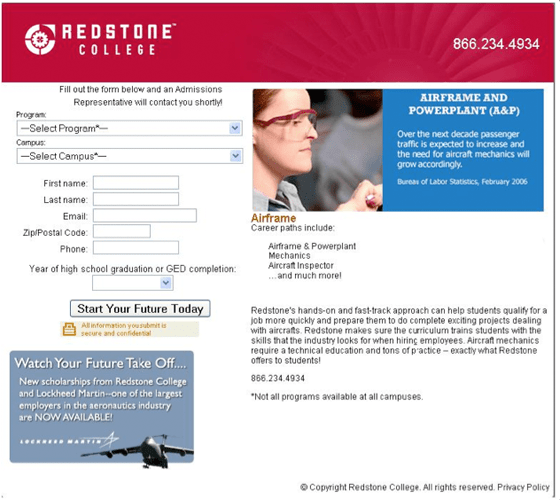

5. Which side of the page should a form be on to get the highest conversions?

44% of the people who took this quiz got this question wrong.

I admit that this could be considered a trick question since the people who took the quiz were familiar with the standard WhichTestWon dichotomy of choosing between Version A or Version B.

For this question, there was a third option: “You have to test it.”

I won’t ruin the answer for you (especially if you plan to take the quiz), but based on our general mission, the answer should be clear. :-)

4. What does a high confidence rate help prevent?

46% of people got this one wrong, and that’s a problem.

In fact, I think this was the question that disappointed me the most.

Understanding confidence level is integral to understanding your test’s validity. If you don’t understand this concept, you may be doing your organization some major harm.

The correct answer

Your test’s confidence level tells you how well the test guards against a Type I error. At a 95% confidence level, you accept a 5% chance of committing one.

A Type I error, also known as a “False Positive,” happens when you conclude that a change to the page caused the difference between variations when that difference was really just random noise.

The confidence level does not indicate the percent of effectiveness of the test or the element’s percent contribution to the total lift. Yes, I’ve heard it explained this way by so-called “experts” more than I’d care to admit.

Currently, the industry standard is a 95% confidence level. This means that if the tested element had no real effect, there would still be a 5% chance of the test declaring a winner.
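Testing tools differ in the statistics they use, so treat this as a sketch rather than a description of how any particular tool works. Assuming a two-sided two-proportion z-test, the snippet below checks whether the difference in conversion rates between two variations clears the 95% bar (a p-value below 0.05). The function name and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, visitors_a, conv_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled rate under the null hypothesis that both variations convert equally
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution, via erfc
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 2.0% vs. 2.5% conversion on 10,000 visitors each
z, p = two_proportion_z_test(200, 10_000, 250, 10_000)
print(f"z = {z:.2f}, p = {p:.3f}, significant at 95%: {p < 0.05}")
```

A p-value below 0.05 does not mean the tested element is responsible for 95% of the lift; it only means a difference this large would be unlikely if the two pages truly converted at the same rate.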

BONUS TIP:

Two other confidence level related items worth noting:

- You can never get a 100% confidence level. Stop reporting that way. It’s wrong.

- Even at a 95% confidence level, errors can occur. Roughly 1 in every 20 tests of a change that has no real effect will produce a false positive. If you have the time and resources, verify results before making changes to your landing page.

Re-testing is a great way to verify results, but is only necessary (in my opinion) for large-scale changes or when you question your results. If you don’t have time to re-test, keep an eye on your numbers and make sure you have a large sample size as well as ample conversions (the rule of thumb is >100 per variation).
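A quick back-of-the-envelope calculation shows why a re-test is such a strong check. Assuming the change truly has no effect, each run uses a 95% confidence level (written here as a significance level α = 0.05), and the two runs are independent, the chance that both runs produce a false positive is:

```latex
P(\text{false positive in both runs}) = \alpha \times \alpha = 0.05 \times 0.05 = 0.0025 \quad (\text{about 1 in 400})
```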

3. If you change a feature on your homepage and see a drop in returning visitor conversions, what may your page be suffering from?

47% of people got this wrong. Knowing the effects that can taint your data is extremely important, so pay close attention to this one!

The correct answer

Whenever you change a long-standing design element or feature on your site, be prepared for a drop-off caused by the “Primacy Effect.”

The Primacy Effect occurs when you make major layout changes that require experienced visitors to “re-learn” how to use your site. This effect can cause your variation to perform poorly in the early stages of testing (even if it turns out to be the winner in the long run).

BONUS TIP:

The Primacy Effect is one of many reasons you should schedule your test to run for at least one buying cycle. Buying cycles vary between industries. For ecommerce stores, tests should run for a full week at minimum. For industries with longer sales cycles, your tests should run for a longer period of time.

Remember, if you call a test too early, you may be making the wrong decision. This will negatively impact your long-term goals.
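To make these rules of thumb concrete, here is a minimal sketch of a “can we call it yet?” check. It assumes the thresholds from this post (one full week for ecommerce, more than 100 conversions per variation, a 95% confidence level); the function, class and constant names are hypothetical, and the p-value is assumed to come from your testing tool.

```python
from dataclasses import dataclass

# Hypothetical thresholds based on the rules of thumb in this post
MIN_DAYS = 7           # one full week for ecommerce; raise this for longer sales cycles
MIN_CONVERSIONS = 100  # rule of thumb: more than 100 conversions per variation
ALPHA = 0.05           # 95% confidence level


@dataclass
class Variation:
    name: str
    conversions: int


def reasons_to_keep_running(days_running, variations, p_value):
    """Return the reasons the test is not ready to call (an empty list means it is)."""
    reasons = []
    if days_running < MIN_DAYS:
        reasons.append(f"only {days_running} of {MIN_DAYS} days have run")
    for v in variations:
        if v.conversions <= MIN_CONVERSIONS:
            reasons.append(f"{v.name} has {v.conversions} conversions (need >{MIN_CONVERSIONS})")
    if p_value >= ALPHA:
        reasons.append(f"p-value {p_value:.3f} is not below {ALPHA}")
    return reasons


# Hypothetical test three days in: too early, even though the p-value looks good
print(reasons_to_keep_running(3, [Variation("A", 80), Variation("B", 120)], 0.03))
```

Checking duration and sample size alongside significance is what keeps an early dip from the Primacy Effect (or an early lucky streak) from deciding the test.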

2. What’s an A/A test?

52% of people got this wrong. This didn’t throw me off as much as some of the other stats, because A/A testing is more of a luxury for organizations with a refined testing program.

The correct answer

An A/A test is when you run the exact same page as two separate test cells.

… Why would you want to test the exact same page against itself? Because A/A testing is a great tool for diagnosing your testing technology. If your numbers aren’t matching up or something just doesn’t seem right, it’s time to make sure your tech is up to snuff.

When you run an A/A test, you are rooting for no statistically significant difference between the two variations. Still, always remember that, on average, about 1 in 20 A/A tests will show a statistically significant result when measured at a 95% confidence level, purely by chance.
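You can see the “1 in 20” effect for yourself with a quick simulation. This sketch assumes both cells share the same true conversion rate (5%, a hypothetical figure), that each A/A test is judged with a two-sided two-proportion z-test at a 95% confidence level, and that visitors convert independently; the function names are hypothetical.

```python
import math
import random


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no conversions (or all conversions) in both cells
    z = (conv_b / n_b - conv_a / n_a) / std_err
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_aa_tests(num_tests=1000, visitors=2000, rate=0.05, alpha=0.05, seed=42):
    """Fraction of simulated A/A tests that look 'significant' purely by chance."""
    rng = random.Random(seed)
    significant = 0
    for _ in range(num_tests):
        # Both cells show the exact same page, so they share one true conversion rate
        conv_a = sum(rng.random() < rate for _ in range(visitors))
        conv_b = sum(rng.random() < rate for _ in range(visitors))
        if p_value(conv_a, visitors, conv_b, visitors) < alpha:
            significant += 1
    return significant / num_tests


print(f"{simulate_aa_tests():.1%} of A/A tests looked significant")
```

The result should land close to 5%. If a real A/A test on your own setup comes out “significant” much more often than that across repeated runs, that is a hint to look harder at your testing technology.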

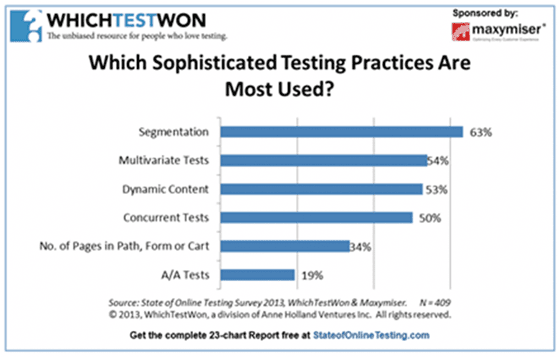

Our 2013 State of Online Testing report showed that in addition to not knowing what an A/A test actually is, fewer people are A/A testing. This is problematic because people are too reliant on tech to do all of their thinking. The best tech can have bugs, and A/A testing is a great place to start if you are skeptical of your data.

And now for the #1 question that saw the greatest percentage of incorrect answers… Drum roll, please!

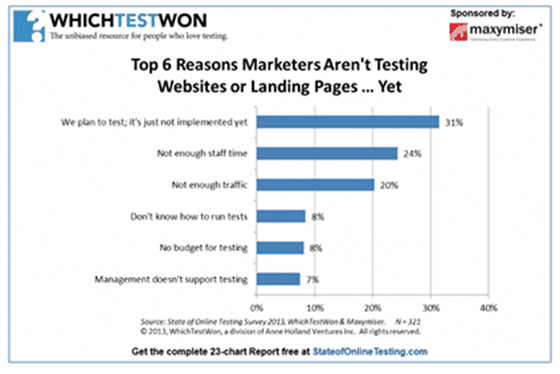

1. What do most marketers say is the biggest hurdle for creating a testing program?

53% of people got this wrong. So what do marketers report as the biggest hurdle to establishing a testing program?

The correct answer

Our State of Online Testing report showed that the two most common reasons for not testing were, “We plan to test it; it’s just not implemented yet” and “Not enough staff time.”

Taken together, these stats suggest that marketers are not prioritizing their testing programs; as a result, many marketers have difficulty finding staff time to create, implement and report on tests.

Many marketers are fortunate enough to have solid testing procedures, resources and management buy-in. That said, we shouldn’t assume that everyone’s testing program is advanced – for many, CRO is still an afterthought.

Whether it’s because they have other priorities or not enough manpower, our report showed that many marketers who understand the importance of CRO still aren’t testing because they can’t find the time.

Testing is not a “one and done” solution. It requires rigorous analysis and creativity spread across several different departments including marketing, design, IT, PR and others.

BONUS TIP:

Getting management to sign off on new staff or to rearrange staff responsibilities is a catch-22 – management wants to see results before you have the resources to actually run the kind of test that inspires management buy-in.

The best way to get over this hump is to pick a “low-hanging fruit” project, or pages with potential for a major lift. Landing page tests are one of the best examples because they can show concrete, easily reported conversion lifts.

Testing technology is inexpensive and generally very easy to use. Whether you’re using qualitative tools (such as eye-tracking, user testing and user surveys) or quantitative tools (such as analytics platforms), start testing today so you can get management buy-in and expand your testing program.

How do you measure up?

My personal mission is to edify marketers, to increase our collective knowledge base and to continue growing conversion rate optimization as a marketing discipline.

And though the results of our pop quiz showed that much progress has yet to be made, many smart marketers have already made CRO an essential component of their marketing efforts.

So how do you measure up in comparison to your peers? Though there were a few quiz spoilers, I suggest giving it a try for yourself to see where you rank. Don’t forget to share your results in the comments!

Good luck and as always, happy testing!
