A few weeks ago, I did a keynote at a conference in Copenhagen where I talked about the 10 biggest A/B testing mistakes I’ve made in my career.

Preparing for that presentation was a fascinating process as it forced me to scrutinize all the mistakes I’ve made and narrow them down to a top 10 list. I was surprised that one of my biggest mistakes was that I used to do too much testing.

Sound strange coming from a guy who has been known as “the split test junkie”? Well, read the rest of the article and it will make a lot more sense…

Let’s start with a real-world success story

I recently ran an A/B test for a client where we experimented with a radically different version of one of their most important landing pages. The treatment won the test and went on to earn the client an extra five figures within the first two weeks of replacing the original landing page.

How did we get these results? It wasn’t by running a large number of experiments or testing a bunch of different ideas. We got the results by putting a hell of a lot of time into preparing the landing page treatment.

In fact, of the time I spent working on this project, only a fraction went into actual testing. We spent the majority of time collecting data, getting insight and building optimization hypotheses.

I did customer interviews, conducted online surveys, dug into analytics, analyzed funnels, ran heat maps, reviewed session recordings and interviewed the guys in customer service and sales.

Then I took all that data and turned it into actionable insights that I could use to build a solid, informed hypothesis on how to optimize the landing page so more potential customers would buy.

Only then – with all that insight in place – did we move on to working on the copy, content and design for the landing page itself.

Give me six hours to optimize a landing page and I’ll spend the first four getting insight

Abraham Lincoln once said, “Give me six hours to chop down a tree, and I’ll spend the first four sharpening the ax.”

Jumping headfirst into a series of landing page tests with no data and insight is like chopping blindly away at a tree for hours with a dull ax, hoping that the tree will eventually give way to the blade and fall over.

In landing page optimization, collecting data and getting insight is like sharpening the ax. We’re trying to increase our chances of being able to take down the tree in the first attempt – maybe even in the first swing.

Your A/B test is only as good as your hypothesis

For a long time, I thought that landing page optimization was all about conducting as many tests as possible – boy, was I wrong about that one! After years of trial and error, it finally dawned on me that the most successful tests were the ones based on insight and solid hypotheses – not impulse, personal preference or pure guesswork.

In my experience, most A/B tests fail because of the underlying test hypothesis – either the hypothesis was fundamentally flawed, or there was no hypothesis to begin with.

In landing page optimization, the test hypothesis is the basic assumption that you base your optimized variant on. It encapsulates what you want to change on the landing page and what impact you expect to see from making that change. Moreover, it forces you to scrutinize your test ideas and helps you keep your eyes on the goal.

If we stick to the Lincoln analogy, formulating a test hypothesis is like doing a test to check whether your blade really is sharp enough to dig into the trunk and effectively cut down the tree.

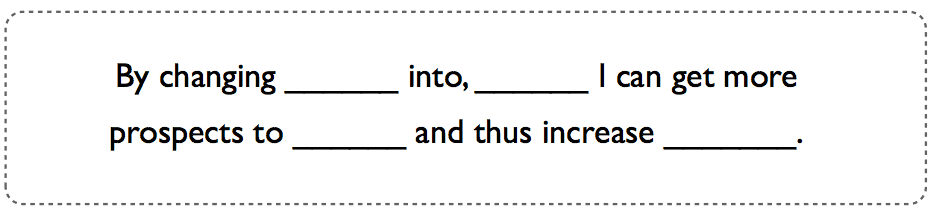

You can do an on-the-fly test of your optimization idea by filling in the blanks in this template:

If the expected outcome seems way too good to be true (or simply stupid), it’s a clear sign that your test hypothesis is too weak to have an impact in the minds of your potential customers.

Working with test hypotheses provides you with a much more solid optimization framework than simply running with guesses and ideas that come about on a whim.

But remember that a solid test hypothesis is an informed solution to a real problem – not an arbitrary guess. The more research and data you have to base your hypothesis on, the better it will be.

Check out this article for a more in-depth guide on how to formulate a solid test hypothesis.

Before running A/B tests, sharpen your ax with this technique

Here’s a technique I’ve developed that will force you to spend more time getting all the basic insight in place before you start testing variations of your landing page.

It’s very simple – basically it’s all about getting the right answers by asking the right questions in the right order: Who? Where? Why? What? How?

Answering each question will vary in difficulty depending on how much insight you have. Some questions will be easy for you to answer, while others will require you to dig deep for more data.

And that’s really the beauty of this technique – it helps you identify pockets of ignorance where you need more insight to fill in the gaps.

1. Who?

Everything starts (and ends) with your potential customers. So before you do anything else, get a clear idea of who is visiting your landing page.

Question to ask:

- Who is visiting my landing page?

2. Where?

Context will impact the decisions and actions of your potential customers, so it’s important that you understand the circumstances that brought them to your landing page. Did they see a banner ad? Did they read your newsletter? Were they searching for answers on Google? Did they click on one of your PPC ads?

Question to ask:

- Where were they before they visited my landing page?

3. Why?

Understanding the motivations and barriers of your target audience is vital. It’s important that you spend time gaining an understanding of what makes them tick.

Questions to ask:

- Why are they visiting my landing page?

- Why would they want what I’m offering?

- Why would they say yes?

- Why would they say no?

4. What?

Now that you know who you are communicating with, where they came from, why they visited your landing page and what their motivations/barriers are, it’s time to get more specific about the content on your landing page.

Questions to ask:

- What do my prospects need to know in order to say yes?

- What should I focus on in order to convey the value of my offer?

- What should I focus on in order to overcome likely objections?

- What’s going to happen after they say yes?

- What is this page missing and what should I add?

- What should I remove from this page?

5. How?

Now – and only now – that you’ve gone through the initial four questions can you move on to the big “how” question:

- How should I optimize this landing page in order to get more prospects to say yes?

If you have a tendency to base your landing page tests on whims and random ideas, try asking yourself this series of questions before you run your next test. I bet you’ll be pleasantly surprised by the effectiveness of your new LPO process.

I’ve used this technique with several clients and though it usually takes several hours to get to the final “how” question, I’m consistently surprised by how much conversation, scrutiny and analysis it has facilitated.
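If you like to keep your research notes in a structured form, here is a minimal sketch of the question sequence as a checklist. The question wording comes straight from the list above; everything else (the `QUESTIONS` dictionary, the `unanswered` and `ready_for_how` helpers, and the sample answer) is made up for illustration and is not part of any tool I use. The point is simply to show where your pockets of ignorance are before you allow yourself to tackle the "how" question.

```python
# Illustrative sketch only: the names and structure here are assumptions,
# not an established tool. Questions are kept in the order Who, Where,
# Why, What, How.
QUESTIONS = {
    "Who": ["Who is visiting my landing page?"],
    "Where": ["Where were they before they visited my landing page?"],
    "Why": [
        "Why are they visiting my landing page?",
        "Why would they want what I'm offering?",
        "Why would they say yes?",
        "Why would they say no?",
    ],
    "What": [
        "What do my prospects need to know in order to say yes?",
        "What should I focus on in order to convey the value of my offer?",
        "What should I focus on in order to overcome likely objections?",
        "What's going to happen after they say yes?",
        "What is this page missing and what should I add?",
        "What should I remove from this page?",
    ],
    "How": [
        "How should I optimize this landing page in order to get more "
        "prospects to say yes?"
    ],
}


def unanswered(answers):
    """Return, in order, every question that has no answer or a blank one."""
    return [
        question
        for group in QUESTIONS.values()
        for question in group
        if not answers.get(question, "").strip()
    ]


def ready_for_how(answers):
    """True only when every Who/Where/Why/What question has been answered."""
    how_questions = set(QUESTIONS["How"])
    return all(question in how_questions for question in unanswered(answers))


if __name__ == "__main__":
    # Hypothetical research notes with only the first question answered.
    notes = {"Who is visiting my landing page?": "Small-business owners"}
    print("Ready for the 'how' question?", ready_for_how(notes))
    for question in unanswered(notes):
        print("Still need insight on:", question)
```

Every question that shows up in the "still need insight" list is a cue to go back to your interviews, surveys and analytics before you start designing a treatment.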

And the moral of the story is…

Running a series of random A/B tests on your landing page with no insight and no underlying hypothesis is like charging blindfolded through the woods, swinging a dull ax and hoping to hit a tree.

It may sound like a ton of fun, but if your goal is to chop down a lot of trees (or get a lot of conversions), it isn’t a very effective strategy by any means.

Preparation is a key ingredient for success, and the more insight you have before you start formulating your test hypotheses and designing your landing page treatments, the more successful you’ll be in the long run. Take it from me – a recovering split test junkie.

Do you create an educated hypothesis before running an A/B test? Let me know in the comments!