“Getting a negative test result is the worst thing that can happen when you perform an A/B test – right?”

While many marketers probably would subscribe to the logic behind this statement, experience from almost 400 split tests tells me otherwise. A negative test result will often give you as much insight and learning as a test that generated a major lift.

Sounds weird? Read the rest of the post, look at some real-world examples and find out what the deal is.

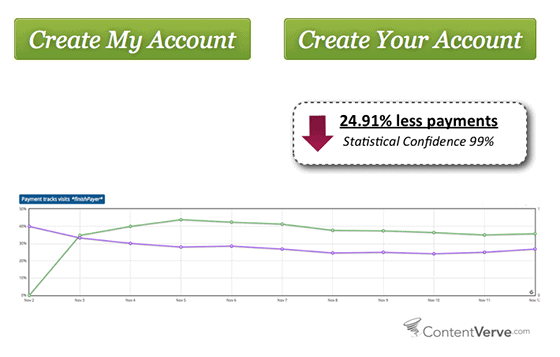

How changing one word in the call-to-action reduced conversions on a payment page by 26.55%

Here’s an example from a series of tests I conducted on a payment page for one of my clients. This page is the last step in the conversion funnel and every click means money in the bank.

Experience from a vast number of A/B tests told me that the call-to-action copy itself would have a major impact on whether prospects would end up clicking the button, and I had a number of hypotheses that I wanted to test.

One of them was the hypothesis that the possessive determiner “Your” works better than “My” when used in a button. Based on the fact that websites generally speak to visitors in second person singular form, it would seem strange to suddenly shift to first person perspective and use the word “My” in the final call-to-action.

I was certain that my hypothesis was dead on, and I basically just performed the test to show the client that I was right. Boy, was I in for a surprise!

When I tested live on the website, it turned out that the treatment with “Your” performed significantly worse than the control copy that made use of “My” – 24.95% worse to be more precise.
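For readers who want to check figures like this themselves, here is a minimal sketch of how a relative change in conversion rate and its statistical significance can be computed. The visitor and conversion counts are made up for illustration (they are not the data from the client test), the function names are hypothetical, and the two-proportion z-test is just one common choice, not necessarily the method used for the tests in this post.

```python
from math import erf, sqrt


def relative_change(control_rate, treatment_rate):
    """Relative change of the treatment versus the control (-0.25 means 25% worse)."""
    return (treatment_rate - control_rate) / control_rate


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal CDF
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical counts, NOT taken from the article
control_conversions, control_visitors = 400, 2000      # "My" button copy
treatment_conversions, treatment_visitors = 300, 2000  # "Your" button copy

change = relative_change(
    control_conversions / control_visitors,
    treatment_conversions / treatment_visitors,
)
z, p_value = two_proportion_z_test(
    control_conversions, control_visitors,
    treatment_conversions, treatment_visitors,
)
print(f"relative change: {change:.2%}, z = {z:.2f}, p = {p_value:.5f}")
```

A negative relative change together with a small p-value is what "significantly worse" means in practice: the drop is unlikely to be random noise.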

Needless to say I was humbled and taken aback by the result of the test – my hypothesis was totally off, and what I thought would increase conversions had the exact opposite effect.

This made me wonder whether what I saw here was a fluke or whether I had actually stumbled upon something that could be used positively. In other words, would I be able to generate positive lifts by changing the possessive determiner to “My” in cases where the CTA copy made use of “Your”?

I ended up performing this test on a number of websites and landing pages and consistently saw lifts by using “My” rather than “Your” in the button copy.

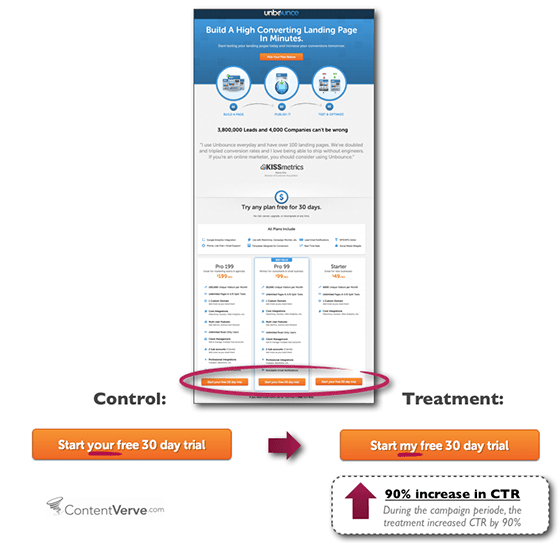

The most dramatic lift was actually in connection with an A/B test that Oli and I performed on a PPC landing page here on unbounce.com. In this case, we saw a 90% increase in click-through rate when we tested “Get my free 30-day trial” against “Get your 30-day trial”.

How adding a privacy policy reduced sign-ups by 18.70%

Here’s another great example of a test where I was very confident that my treatment would kick ass. In fact, I didn’t even perform the test to see whether the treatment would generate a lift; I performed it to see how much of a lift it would generate.

The client here is Bettingexpert.com, an international betting community. I was hired to optimize the home page with the goal of getting more potential users to sign up for a membership.

I decided to focus on the most critical part of the page – the sign-up form itself. One of the things that struck me early on was the fact that the form had no privacy policy. Taking the nature of the website into consideration, it seemed quite safe to assume that adding a privacy policy would mitigate anxiety and make more people sign up.

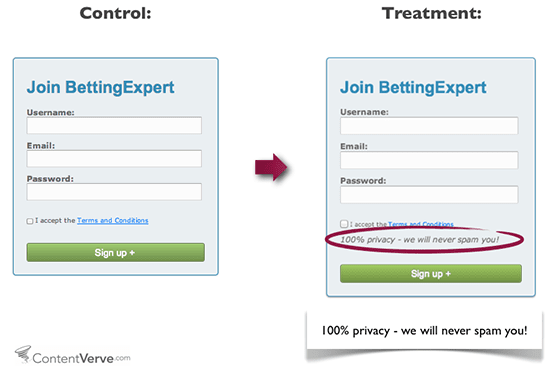

I decided to test a slight variation of a privacy policy that I had come across on a very popular online marketing blog. The policy said, “100% privacy – We will never spam you”.

As mentioned, I felt very confident while setting up the test, and I was excited about the prospect of seeing how much better the treatment would perform in real life. Boy did I have another thing coming!

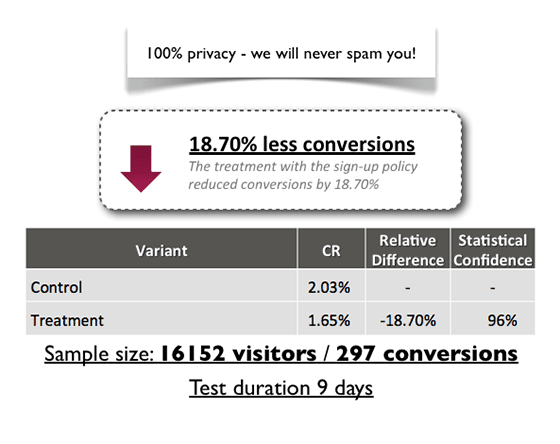

The treatment with the privacy policy reduced conversions by 18.70%. Again I was humbled and taken aback by the test data. The result was completely counter-intuitive, but it taught me an important lesson: simply adding a privacy policy doesn’t guarantee more sign-ups. In fact, it can seriously reduce your conversion rate.

This negative test result really got my mind going, and I decided to run a number of follow-up experiments to learn more about what made this particular privacy policy have a negative impact in the mind of the prospects.

My tests indicated that the word “spam” had an undesirable effect – even when used to assure visitors that they would not receive any spam. My hypothesis is that by placing the word “spam” in close proximity to the form, you actually plant an idea in the minds of the prospects: “Oh wait, could they actually end up spamming me?”

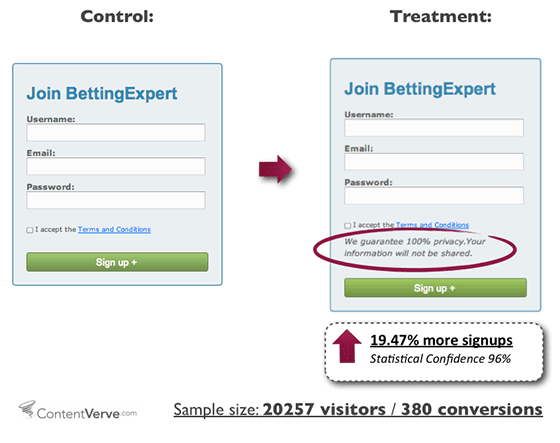

After 3 follow-up experiments, I ended up with a winner that worked well and increased sign-ups by 19.47%. The winning privacy policy said, “We guarantee 100% privacy. Your information will not be shared.”

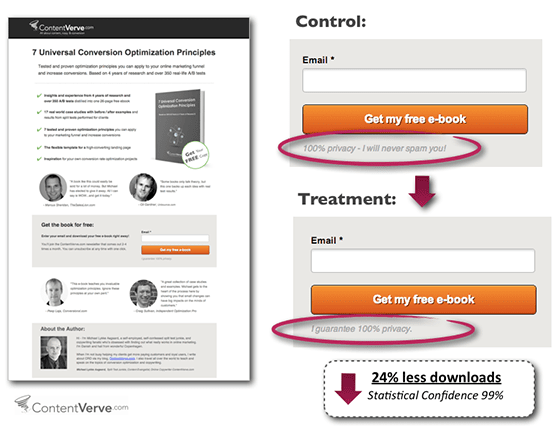

I’ve performed privacy policy tests on other landing pages, and the results have been quite similar. Here’s an example from the landing page for my free CRO e-book 7 Universal Conversion Optimization Principles, where I saw a 24% drop in downloads when I ran the original “I guarantee 100% privacy.” against “100% privacy – I will never spam you!”

And the moral of the article is…

“The goal of a test is not to get a lift, but rather to get a learning…” Dr. Flint McGlaughlin, MECLABS

One might be inclined to view some of the case studies in this article as bad tests. But in fact they weren’t bad tests at all, because they provided important insights that eventually led to serious conversion lifts on a number of different landing pages.

Landing page optimization is an ongoing process, and as long as your test results lead to new insights and learning, it essentially isn’t that important whether the initial test results are positive or negative.

Of course, hitting a home run on the first swing is easier on the ego. But when you approach optimization as a process – not a one-off opportunity to swing for the fences – you’ll see that stopping at a few bases along the way is often what it takes to win the game.