The dream scenario for most online businesses is to get the highest possible lift with the smallest possible investment in optimization.

The obvious way of making this dream come true is to implement small, simple changes that have a significant effect on conversions. But I know from experience that identifying such optimization opportunities can be really, really difficult.

In this article we’ll take a closer look at when, how and why small changes can have a major impact on increasing conversion rates.

Let’s start out with an example from the real world…

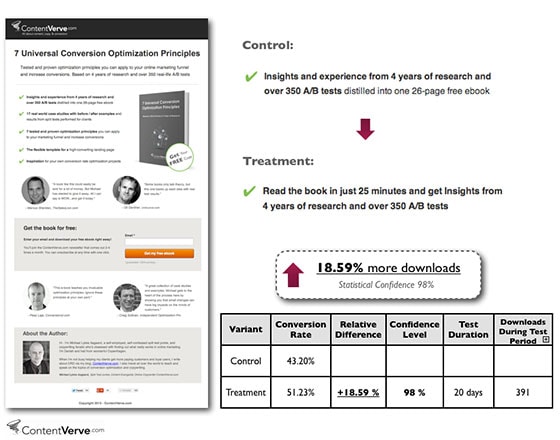

How a simple Landing Page copy tweak generated an 18.59% conversion lift

This is a test I recently performed on the landing page for my free ebook 7 Universal Conversion Optimization Principles. I was able to generate an 18.59% increase in downloads by simply tweaking one bullet point.

At first glance you might be thinking, “Come on, man – changing a few words in one bullet point can’t have that much of an impact!”

But if we dig a little bit deeper and look at the underlying test hypothesis, you’ll see that it really does make a lot of sense.

First off, the first bullet point holds a prominent position on the page, and an analysis with Eyequant (a clever new tool that predicts how visitors will view your landing pages) suggested that the first few words of this bullet point would attract initial attention from visitors.

Secondly, I know from my own behavior that time is a barrier that impacts my decision every time I’m about to download a free ebook; I simply don’t have time to read all the stuff on my iPad.

I figured that I couldn’t be the only person with this problem and that I might be able to increase conversions by emphasizing the fact that my ebook only takes 25 minutes to read.

This sequence of thoughts resulted in the following hypothesis:

By tweaking the copy in the first bullet point to directly address the ‘time barrier’, I can motivate more visitors to download the ebook and increase the conversion rate of the landing page.

This test hypothesis led to tweaking the bullet copy, which in turn led to a significant increase in downloads.
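A lift figure like the one above is a relative change between two conversion rates, and whether it counts as "significant" depends on how much traffic each variation received. The sketch below shows one common way to work that out: a relative lift calculation plus a pooled two-proportion z-test. The visitor and download counts are hypothetical and are not the data from this test, the function names are made up for illustration, and the z-test is an assumption rather than the method actually used here.

```python
import math


def relative_lift(control_rate, treatment_rate):
    """Relative lift of the treatment over the control, in percent."""
    return (treatment_rate - control_rate) / control_rate * 100.0


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled two-proportion z-test. Returns (z, p_value)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# HYPOTHETICAL counts, chosen only to illustrate the arithmetic.
control_visitors, control_downloads = 1000, 200
treatment_visitors, treatment_downloads = 1000, 240

lift = relative_lift(control_downloads / control_visitors,
                     treatment_downloads / treatment_visitors)
z, p = two_proportion_z_test(control_downloads, control_visitors,
                             treatment_downloads, treatment_visitors)

print(f"Relative lift: {lift:.2f}%")
print(f"z = {z:.3f}, two-sided p = {p:.4f}")
```

With the same rates but far fewer visitors, the lift would be identical while the p-value would be much larger, which is why a lift percentage on its own says little about how trustworthy a result is.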

Why and when small changes have a major impact on conversion

In order for any change to have an impact on conversion, it must first have an impact in the mind of the prospect. As the above example demonstrates, small changes can have a major impact when they:

- Address a pain point or mental barrier that stands in the way of the prospect making the “right” decision on the landing page

- Are made strategically to a prominent element on the landing page

- Are made strategically to a mission-critical element on the landing page

Let’s look at some more real-world examples:

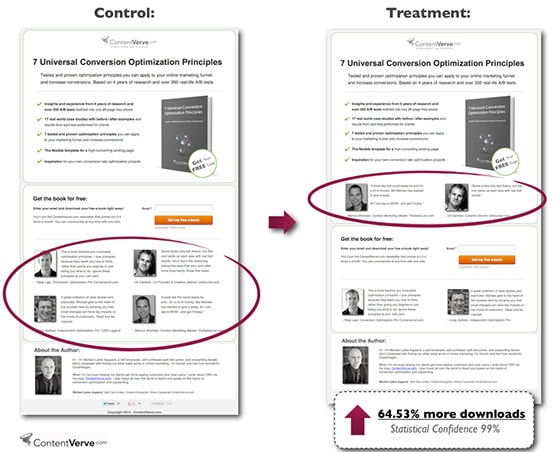

Making a big impact by addressing a mental barrier

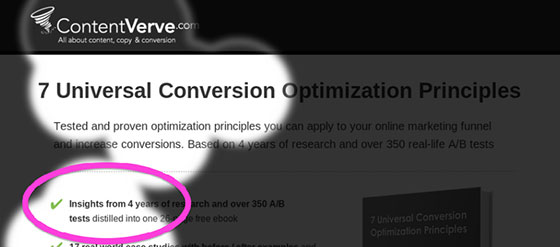

When it comes to free ebooks, there’s another mental barrier that I recognized from my own behavior: Is the book worth reading?

I’ve read my fair share of free ebooks that totally under-delivered on their value proposition. To address this question on my ebook landing page, I’ve added social proof in the form of expert testimonials from industry thought leaders who’ve read the book.

On the control landing page, I placed the four testimonials at the bottom of the page just below the CTA. But I hypothesized that the testimonials would have more impact if two of them were placed higher on the page so they’d be easy to spot as soon as a visitor lands on the page.

The test data confirmed my hypothesis, and the treatment increased the number of downloads by 64.53% (!).

Again this may seem like a crazy result, but with a little more insight it makes perfect sense. The first testimonial is by Marcus Sheridan of The Sales Lion and it says, “A book like this could easily be sold for a lot of money. But Michael has elected to give it away. All I can say is WOW…. and get it today.”

The second testimonial is from Unbounce’s very own Oli Gardner and it says, “Some books only talk theory, but this one backs up each idea with real test results.”

This means that I have two testimonials from credible sources that directly address the question (mental barrier) of whether the ebook is worth the read. It only makes sense to showcase them prominently on the page.

Making a big impact by tweaking a prominent element

Here’s an example of a painfully simple change I made on a YouTube Mp3 Converter landing page. In this case, simply changing the font color of the headline from orange to black was enough to increase downloads by 6.64%.

A simple squint test (squint your eyes, stand back and see which elements stand out) revealed that the orange font color made the headline fall in line with the rest of the landing page design. I hypothesized that changing the font color to black would make the headline copy stand out and increase readability.

I put my hypothesis to the test and was pleased to see that it held water: the change in font color increased readability and made it easier for prospects to understand the value of the offer right away.

The headline is one of the most prominent elements on your landing page. It’s also one of the few copy elements that you can be 99% sure your prospects will read, so it has a significant impact on your potential customer’s decision-making process.

More often than not, small headline tweaks will have a measurable impact on conversion.

Making a big impact by tweaking a mission-critical element on your landing page

Mission-critical elements are parts of your landing page that your prospects have to interact with in order to get to the next step in the conversion funnel. Call-to-action buttons are a great example.

While CTA buttons may seem like an insignificant design element, they play a decisive part in the conversion sequence and have a direct impact on the decisions and actions of your prospects – regardless of whether you’re asking them to download a PDF, fill out a form, buy a product, or even just click through to another page.

This means that your CTA buttons represent the tipping point between bounce and conversion. They tie every step in the conversion sequence together and make it possible to move from one “micro yes” to the next, all the way to the final “macro yes”.

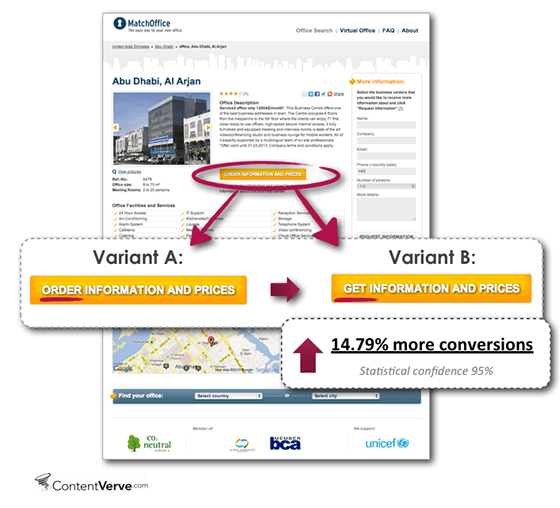

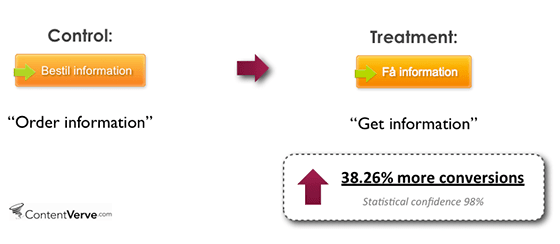

Here’s an example from a test I conducted on MatchOffice.com – an international commercial real estate portal through which businesses can find offices for rent.

Once a prospect finds a relevant office, they have to click through the main CTA in order to get more information on the office via e-mail. This means that clicking the CTA is the main conversion goal, and every extra click potentially means money in the bank.

By changing the button copy from “Order Information and Prices” to “Get information and Prices” we increased conversions by 14.79%.

In case you think this test was a fluke, here’s an example from a Danish sister website where exactly the same exercise resulted in a conversion lift of 38.26%!

It’s a subtle change, but it completely changes the perceived value of clicking the button: “Order” emphasizes what I have to do when I click the button, whereas “Get” emphasizes what I’ll gain by clicking the button.

The more value to the user you can convey via your CTA copy, the more clicks the button will get.

I’ve conducted a vast number of tests with CTA buttons and it’s quite astounding how much you can move the needle just by optimizing your buttons.

In my experience, optimizing CTAs represents the ultimate low-hanging fruit in landing page optimization (LPO).

Sign-up forms are another good example of mission-critical elements. You find them on tons of landing pages and they often represent the main conversion goal.

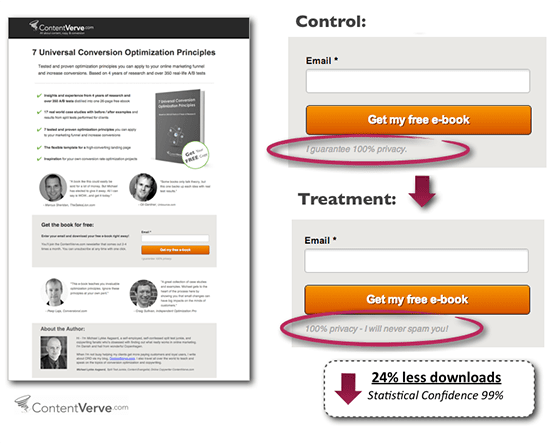

Here’s one more example from my ebook landing page where a simple variation of the privacy policy generated a 24% drop in downloads.

I’ve conducted similar experiments on other websites and my research suggests that the word “spam”, when used in the privacy policy, has a demotivating effect on prospects.

There are many plausible explanations, but it seems that using the “S word” – even if the intention is to guarantee against it – plants the idea in the minds of the prospects and makes them more concerned about signing up.

And the moral of the article is…

“It is not the magnitude of change on the “page” that impacts conversion; it is the magnitude of change in the “mind” of the prospect.”

–Dr. Flint McGlaughlin

In other words, landing page optimization isn’t actually about optimizing landing pages; it’s about optimizing decisions. And increasing conversions isn’t about making radical changes – it’s about making the right changes.