Customer reviews may scare some marketers because they give consumers power, but they are a key component of success on the web.

Thirty percent of users turn to Amazon to research products before buying, in large part because of the customer reviews. Only 13 percent of consumers turn to Google.

Figleaves.com was able to boost conversions by 35 percent by adding customer reviews. eSpares.co.uk increased conversions by 14.2 percent. Walker Sands boosted a client’s conversion rate from 9.5 to 10.5 percent. Need I go on?

Nothing breeds trust quite the way an unfiltered customer review stream does, but that doesn’t mean you should just add a template review form to your site. We need to understand how and why these reviews are influencing sales and we need to organize them in a way that will optimize conversions.

To do that, we need data-driven strategies. The good news? I’ve got some for you.

How customer reviews impact conversions

To investigate how reviews influence conversions, a team of research professors turned to the obvious place: Amazon. The results of the experiment were published in the Journal of Marketing.

The team pulled data on Amazon conversions for 591 books with 18,682 customer reviews. To avoid confusing cause and effect, they collected data on other factors that could influence conversions, such as price, review volume, genre and advertising (which was found to be so insignificant in this case that it was disregarded).

Then they employed text analysis tools to evaluate two properties of the reviews:

- The positive or negative emotional tone of the review (more than 100 studies have demonstrated that this process results in outcomes similar to those of human raters)

- The linguistic style of the review. They did this by evaluating how similar each review was to other reviews for books in the same genre, based on how often certain words were used. Previous research has shown that when people share common jargon and slang, they develop a shared sense of identity and trust each other more, no matter how little they actually know about each other.
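
To make the second property a little more concrete, here is a minimal Python sketch of one common way to score style similarity: compare how often a review uses certain categories of words against how often the rest of the genre's reviews use them. The category lists, the function names and the small smoothing constant are all assumptions for illustration, not the researchers' actual tooling.

```python
import re

# Hypothetical, heavily abbreviated word categories; real text-analysis
# dictionaries are far larger.
STYLE_CATEGORIES = {
    "pronouns": {"i", "you", "we", "it", "they"},
    "articles": {"a", "an", "the"},
    "prepositions": {"in", "on", "of", "to", "with", "for"},
    "negations": {"no", "not", "never"},
}


def category_rates(text):
    """Share of words in `text` that fall into each style category."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words) or 1  # avoid division by zero on empty text
    return {
        name: sum(word in vocab for word in words) / total
        for name, vocab in STYLE_CATEGORIES.items()
    }


def style_match(review, genre_reviews):
    """Score up to 1; higher means the review's style is closer to the genre's."""
    review_rates = category_rates(review)
    genre_rates = category_rates(" ".join(genre_reviews))
    scores = []
    for name in STYLE_CATEGORIES:
        a, b = review_rates[name], genre_rates[name]
        # The small constant keeps the division safe when both rates are zero.
        scores.append(1 - abs(a - b) / (a + b + 0.0001))
    return sum(scores) / len(scores)
```

A review that uses these word categories at the same rates as the rest of its genre scores 1, and the score drops toward 0 as the rates diverge.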

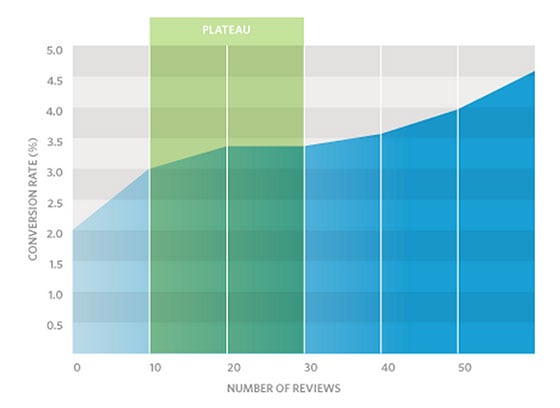

Not surprisingly, the researchers found that negative reviews had a stronger impact on sales than positive reviews did. But what’s interesting is that the sheer number of reviews, regardless of what they discussed or how favorable they were, had a positive impact on sales.

This effect has been backed up by Reevoo (and many others).

The study also found that positive star ratings didn’t influence sales. Only the content of the reviews impacted conversions. This might be because everybody has a different definition of what counts as a 5-star or 1-star product, and the review itself contains the information you need to make that judgment for yourself.

But here’s the thing…

While the star ratings themselves didn’t influence sales, the variability in star ratings positively influenced sales.

In other words, if a visitor sees nothing but 5 star reviews, they get suspicious.

Keep in mind that this is on Amazon, a trusted brand where the default assumption is that the customer reviews are real. This skepticism can only get worse if the user reviews are on a platform they’ve never seen before.

In short, while a plethora of negative reviews is going to sink your product, a collection of excessively happy customer reviews will have your visitors crying “Fake!”

There’s more to it than positive and negative

As I mentioned earlier, the study looked at not just the emotional tone of the reviews, but also their linguistic style, which did, in fact, make a difference.

Again, this comes down to jargon and slang. Readers will probably find a sci-fi reviewer more trustworthy if they reference “the uncanny valley” or “steampunk.” A self-help reviewer might be taken more seriously if they mention “affirmations” or “Stephen Covey.”

Younger readers will probably respond better to something that’s “epic,” as opposed to “cool.”

This is where things can start to get a bit more complicated. How do you measure “linguistic style” without advanced text analysis software? Does it even matter to a marketer?

Yes, it does. And there are a surprising number of ways that you can influence it:

- Including editorial reviews that match the desired linguistic style

- Calling out customer reviews that match the desired linguistic style

- Using the appropriate linguistic style within the product descriptions and landing page

- Including brief reviewer guidelines that can direct the reviewer’s writing style

Identifying the desired linguistic style to begin with is more difficult, and without text analysis tools it’s impossible to pull off quantitatively. Still, simply being aware of its existence should give you an incentive to test with:

- Heatmap and behavioral data – linked to specific users if possible – that indicates which reviews have the strongest impact on conversion rates

- Survey tools like Qualaroo, which could ask for feedback on the linguistic style, with questions like “How did you feel about the use of jargon on this page?”

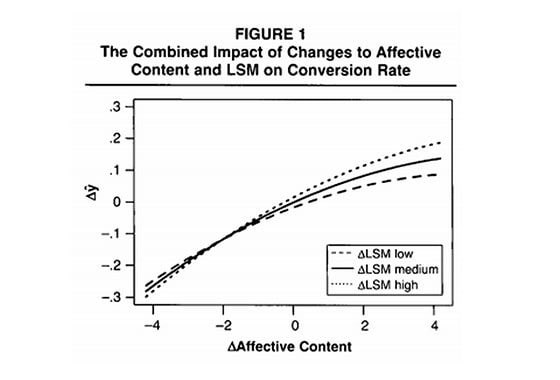

There’s one more major reason you should care about linguistic style. If a review is positive and has the appropriate linguistic style, the impact on conversions is even stronger:

This graph comes from the paper. The vertical axis represents the change in conversion rate. The horizontal axis represents the positive or negative emotional tone of the review, and LSM stands for “linguistic style matching.”

As you can see, the dotted line representing “LSM high” rises above the others as the positive tone of the message gets stronger.

In other words, the better the linguistic style matches, the more tolerant consumers are of excessively positive reviews.

Sorting reviews by most recent? Bad idea.

Most people aren’t going to read all of the reviews, just the first few of them. If the reviews are organized by something as arbitrary as how recent they are, you’re going to miss out on some serious conversion opportunities.

So, how should you organize the reviews? Let’s start with what not to do:

Don’t sort by most positive rating. First off, remember that the star rating itself doesn’t actually influence sales; only the content of the review matters. Second, remember that a wider range of star values actually increases conversions, despite the fact that this means more negative reviews are visible.

Instead, you should consider testing these and other alternatives:

- Allow users to rate each review, and then sort them by most helpful. The study found that the most helpful reviews on Amazon were also the most likely to use the appropriate linguistic style.

- Show users a representative sample of the variability in star ratings. In other words, if 50 percent of the reviews have 5 stars, show them a list of 10 reviews, 5 of them with 5 star ratings.

- Experiment with showing the most positive reviews from each star rating. In other words, show the 1-, 2-, and 3-star reviews whose actual content reflects the product most positively.

- Experiment with showing the best linguistic style for each star rating.

- If you have the resources to code this solution, try presenting the reviews in random order, measuring how each influences conversion rates, and then sorting the reviews by strongest impact on conversions.
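
For that last option, here is a rough Python sketch of what the measurement might look like. It assumes you can log, for each visitor session, which review was shown in the top slot and whether the visitor converted. The function names, the `(review_id, converted)` log format and the `min_views` cutoff are all hypothetical.

```python
import random
from collections import defaultdict


def pick_top_review(review_ids, rng=random):
    """Choose which review a visitor sees first, uniformly at random."""
    return rng.choice(review_ids)


def rank_by_conversion(sessions, min_views=100):
    """Rank review IDs by the conversion rate of sessions that showed them first.

    `sessions` is an iterable of (review_id, converted) pairs, a hypothetical
    log format. Reviews with fewer than `min_views` sessions are left out
    because their rates are too noisy to trust.
    """
    views = defaultdict(int)
    conversions = defaultdict(int)
    for review_id, converted in sessions:
        views[review_id] += 1
        conversions[review_id] += int(converted)
    rates = {
        review_id: conversions[review_id] / views[review_id]
        for review_id in views
        if views[review_id] >= min_views
    }
    return sorted(rates, key=rates.get, reverse=True)
```

A raw conversion rate per review is the simplest possible yardstick. Before trusting the ranking, you would still want enough traffic per review (and ideally a significance test) to rule out noise.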

If I had to choose an option without running any tests, I’d probably run with some combination of most helpful and most representative.

Here’s what I mean: it’s possible that if you sorted exclusively by most helpful, you’d end up with too many positive reviews and users would become skeptical. Including some negative reviews to show a wider range of opinions may actually boost conversions.
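
One way to sketch that combination in Python: give each star rating a share of the visible slots proportional to its share of all reviews, then fill each share with the most helpful reviews at that rating. The `stars` and `helpful_votes` keys and the function name are assumptions for illustration, and the rounding rule (largest remainder) is just one reasonable choice.

```python
from collections import defaultdict


def representative_helpful(reviews, slots=10):
    """Pick `slots` reviews whose star mix mirrors the full set.

    `reviews` is a list of dicts with hypothetical keys "stars" and
    "helpful_votes". Within each star rating, the most helpful are picked.
    """
    by_stars = defaultdict(list)
    for review in reviews:
        by_stars[review["stars"]].append(review)

    total = len(reviews)
    slots = min(slots, total)
    # Ideal (fractional) number of slots for each star rating.
    ideal = {stars: slots * len(group) / total for stars, group in by_stars.items()}
    quota = {stars: int(share) for stars, share in ideal.items()}
    # Hand leftover slots to the ratings with the largest fractional remainders.
    leftover = slots - sum(quota.values())
    by_remainder = sorted(ideal, key=lambda s: ideal[s] - quota[s], reverse=True)
    for stars in by_remainder[:leftover]:
        quota[stars] += 1

    picked = []
    for stars, group in by_stars.items():
        group.sort(key=lambda r: r["helpful_votes"], reverse=True)
        picked.extend(group[: quota[stars]])
    # Show the most helpful reviews first, whatever their rating.
    return sorted(picked, key=lambda r: r["helpful_votes"], reverse=True)
```

With 10 slots and a product where half the reviews have 5 stars, this returns five 5-star reviews and splits the other five slots across the remaining ratings.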

But again, as always, you’ll never know without testing.