A/B testing landing pages is a bit like performing surgery. It requires patience and skill, and if you’re not sure why you’re performing the operation in the first place, you’re bound to botch it.

Because testing is a complex science, people have a lot of questions. So we set out to ask conversion rate optimization experts some of the most common ones.

This week, we spoke with our good friend Peep Laja, the man behind ConversionXL and former co-host of Page Fights. Peep will also be a member of our A/B testing super-panel at our Call to Action Conference in September, where you can ask him plenty more questions.

We asked Peep to explain how to know when an A/B test is finished. Read on to find out what he had to say.

When is an A/B test cooked?

When we test our landing pages, we’re looking for something that tells us which elements of our page are working and which are not. In order to get that answer, a test must achieve a certain confidence level.

Here’s a great explanation of what confidence level is from the Unbounce conversion glossary:

The probability that the winning variation of your A/B test had more conversions for reasons other than chance. Before declaring a winner, you want a confidence level of 95% or more, as well as a sufficient number of conversions.

But is that the only factor you should consider before declaring a winner? Here’s what Peep had to say:

When your testing tool says that you’ve reached 95% or even 99% confidence level, that doesn’t mean that you have a winning variation. Around 77% of the A/A tests (same page against same page) will reach significance at a certain point.

What does this mean? First, a quick explanation of A/A tests.

What is an A/A test?

An A/A test is when you pit one landing page against itself.

This is done to validate that you’ve set up your test correctly and that you’re getting roughly the same number of conversions for each variant. If the numbers are significantly different (even though the pages are identical), there may be an issue with your setup or with the tool you’re using.

More broadly speaking, this serves as a gentle reminder of the importance of taking your time before selecting a winner in any test. If one variation of an A/B test shows better results, it doesn’t immediately mean that it’s the winner. As Peep says:

The reality is that statistical significance is not a stopping rule. That alone should not determine whether you end a test or not.
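
To see why significance alone makes a poor stopping rule, here is a rough simulation sketch of A/A tests in Python. It is only an illustration under stated assumptions: the 5% conversion rate, the traffic numbers, the peeking interval and the function names are all hypothetical, and the significance check is a textbook two-proportion z-test, which is not necessarily what your testing tool uses. The exact share of "significant" A/A tests depends heavily on these choices, so don't expect it to reproduce the figure Peep quotes.

```python
import math
import random


def z_test_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions): nothing to compare
    z = (conv_a / n_a - conv_b / n_b) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_aa_tests(runs=300, visitors_per_arm=3000, rate=0.05,
                      check_every=100, alpha=0.05, seed=1):
    """Simulate identical pages against each other (hypothetical numbers).

    Returns two shares:
      - tests that looked significant at ANY peek along the way
      - tests that were significant at the single, final check
    """
    rng = random.Random(seed)
    ever_significant = 0
    final_significant = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        hit = False
        for n in range(1, visitors_per_arm + 1):
            # Both "variations" share the same true conversion rate.
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # Peek at the results every `check_every` visitors per arm.
            if n % check_every == 0 and z_test_p_value(conv_a, n, conv_b, n) < alpha:
                hit = True
        ever_significant += hit
        final_p = z_test_p_value(conv_a, visitors_per_arm, conv_b, visitors_per_arm)
        final_significant += final_p < alpha
    return ever_significant / runs, final_significant / runs


if __name__ == "__main__":
    ever, final = simulate_aa_tests()
    print(f"Significant at some peek: {ever:.0%}")
    print(f"Significant at the end:   {final:.0%}")
```

The more often you peek, the more chances random noise has to cross the 95% line, even though the two pages are identical.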

So, Peep, seriously, when is an A/B test cooked?

Alas, there is no single truth out there, and there are a lot of “depends” factors. That being said, you can have some pretty good stopping rules that will get you to the right path in most cases.

Here are two stopping rules Peep recommends.

Reach your test duration

Peep says that to collect accurate data, you should run your test for at least two to four weeks.

Letting more time pass gives you more well-rounded data to analyze, which helps you account for any anomalies in your data.

Reach your pre-determined sample size

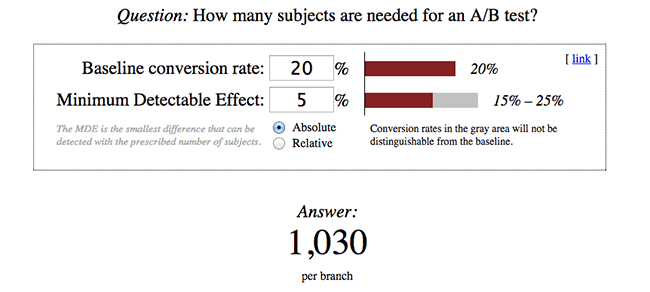

To reach a given confidence level, you first need to know how large a sample is required. In other words, you have to establish how much traffic you’ll need before you can declare a test complete.

This tool made available by A/B testing expert Evan Miller will allow you to answer the question, “How many subjects are needed for this A/B test?”

Just punch in your numbers and it’ll spit out an appropriate sample size.
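
If you’re curious what a calculator like this does under the hood, here is a minimal Python sketch. It uses a common textbook approximation for comparing two conversion rates and is not necessarily the formula Evan Miller’s tool uses, so the numbers may differ somewhat. The function name, the 5% significance level and the 80% power default are assumptions made for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, min_detectable_effect,
                              alpha=0.05, power=0.80):
    """Approximate visitors needed per variation for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%
    min_detectable_effect: smallest ABSOLUTE lift worth detecting,
        e.g. 0.01 to detect a move from 5% to 6%
    alpha: significance level (0.05 corresponds to 95% confidence)
    power: chance of detecting the lift if it really exists
    """
    p1 = baseline_rate
    p2 = baseline_rate + min_detectable_effect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical page: 5% baseline, hoping to detect a lift to 6%.
    print(sample_size_per_variation(0.05, 0.01))
```

Note how quickly the requirement grows as the lift you want to detect shrinks: halving the detectable effect roughly quadruples the traffic you need.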

Only once both of these conditions have been met should you check whether your confidence level is 95% or higher. If it isn’t, your test has not given you sufficient data to make a decision.
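
The order of those checks matters, so here it is spelled out as a small Python sketch. The function name and default thresholds are hypothetical: 14 days stands in for the low end of Peep’s two-to-four-week range, and the required sample should come from your own sample size calculation rather than the placeholder used here.

```python
def ready_to_call(days_run, visitors_per_variation, confidence,
                  min_days=14, required_sample=8000, min_confidence=0.95):
    """Apply the stopping rules in order: duration, sample size, then confidence."""
    if days_run < min_days:
        return "keep running: minimum duration not reached"
    if visitors_per_variation < required_sample:
        return "keep running: sample size not reached"
    # Confidence is only consulted after both stopping rules are satisfied.
    if confidence < min_confidence:
        return "inconclusive: not enough evidence to pick a winner"
    return "conclusive: confidence threshold met"


if __name__ == "__main__":
    # A test that hit 99% confidence after five days is still not done.
    print(ready_to_call(days_run=5, visitors_per_variation=1200, confidence=0.99))
```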

Don’t stop ’til you get enough

All too often we see people make changes based on incomplete data.

They end up being disappointed by the results of making that snap decision. Their pages don’t convert better – they may even convert worse. All because not enough time was taken to really evaluate whether the data was ready for analysis.

By taking Peep’s advice, you’ll be able to make decisions with confidence, and start to see results that will turn your frown upside down!