It’s been said that A/B testing is like sex in high school – everyone talks about it, but no one is doing it.

Excuses range from lack of resources to not having enough traffic, but a lot of procrastination comes down to simply not knowing where to start. As inspiring as they are, run-of-the-mill blog posts like “The Top 10 Things You Need to Be Testing NOW (#9 Will Shock You!)” don’t exactly convert testing virgins into A/B testing addicts.

In our most recent Unwebinar, Hiten Shah of Crazy Egg and KISSmetrics explained that simply copying A/B testing ideas from others will get you nowhere:

“You can’t double your win rate just by copying ideas – you need to focus on building the right #process”. #unwebinar @unbounce

— Erin Brown (@ErinCBrown) November 25, 2014

Instead, you need a deliberate process in which you’re testing on a tight schedule, consistently improving conversions and continuously learning from your tests.

Hiten shared a four-step process that will not only help you formulate smarter hypotheses, but also help you develop an intuition for future tests so you can generate bigger wins with less effort.

Sound good to you?

You can watch the full webinar recording, or read on for an overview of his four-step process.

1. Find pages to test

Before you formulate your first hypothesis – before you even start thinking about your first A/B test – stop. Take a step back and identify which of your pages has the greatest potential for improvement.

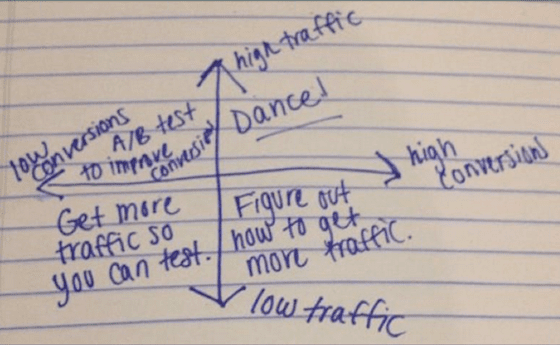

To do this, Hiten recommended diving into your analytics and identifying which of your landing pages have the highest volume of traffic but a low conversion rate.
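Suppose your analytics tool can export visits and conversions per landing page. A short script can then build that shortlist for you. This is a minimal sketch, and several of its parts are illustrative assumptions rather than Hiten’s exact method:

- the `PageStats` class and the `min_visits` threshold
- the sort order, which puts the lowest conversion rate first among pages with enough traffic

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Traffic and conversion counts for one landing page (hypothetical analytics export)."""
    url: str
    visits: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.visits if self.visits else 0.0


def find_pages_to_test(pages, min_visits=1000, top_n=3):
    """Return pages with enough traffic, lowest conversion rate first.

    Ties on conversion rate are broken by traffic, so busier pages come first.
    """
    candidates = [p for p in pages if p.visits >= min_visits]
    candidates.sort(key=lambda p: (p.conversion_rate, -p.visits))
    return candidates[:top_n]


if __name__ == "__main__":
    # Made-up numbers for illustration.
    pages = [
        PageStats("/pricing", 12_000, 240),  # 2.0%
        PageStats("/webinar", 8_000, 640),   # 8.0%
        PageStats("/ebook", 15_000, 150),    # 1.0%
        PageStats("/contact", 300, 3),       # 1.0%, but too little traffic to test
    ]
    for page in find_pages_to_test(pages):
        print(f"{page.url}: {page.visits} visits, {page.conversion_rate:.1%} conversion rate")
```

The `min_visits` cutoff matters. A page with 300 visits and a 1% conversion rate looks just as bad as a busy one, but it won’t give you enough data to test against.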

Don’t have enough traffic?

All of that sounds pretty straightforward, but what if you don’t have very much traffic?

A small sample size doesn’t reveal much actionable insight. And that means you can’t get to work at improving your conversion rate, right?

Not quite.

Hiten explained that running A/B tests and watching for the highest number of conversions (quantitative data) isn’t the only way to increase your conversion rate. Collecting qualitative data can also help you move the needle.

Here’s what Hiten recommended:

- Engage in qualitative research such as user testing. Services such as Peek give you a free five-minute video of a person using your landing page. Watching an unbiased person interact with your page is an easy way to spot issues and see where people are getting confused.

- Or… find ways to get more traffic. This probably isn’t what you wanted to hear, but Hiten explained that growing your traffic is an important part of conversion rate optimization.

2. Create a hypothesis

You shouldn’t just pull your hypothesis out of thin air.

At their core, successful A/B tests have one thing in common: a solid hypothesis derived from hours of research.

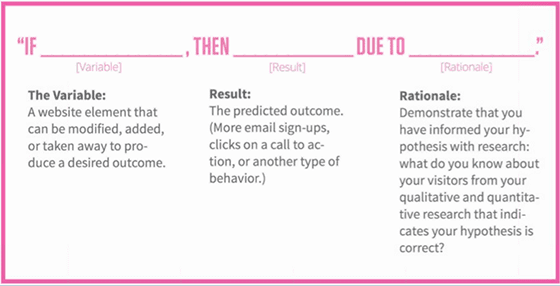

If that sounds daunting, don’t fret. Hiten shared a pretty straightforward formula you can use to structure your next A/B testing hypothesis:

But how exactly do you flesh out the formula above? Hiten explained that taking the time to conduct preliminary research will help you formulate stronger hypotheses that generate bigger wins.

Here are some research methods he recommended:

- Use services like Qualaroo to ask prospects what’s preventing them from converting

- Survey customers to find out what persuaded them to purchase

- Use heat-mapping software such as Crazy Egg to get a visual representation of where people are clicking (without any impact on the user experience)

Collecting insights using those research methods will help you get a feel for what’s working and what’s not. From there, you can identify what needs to change and get to work on your first hypothesis.

Determine which test you should prioritize

On the webinar, Hiten shared a straightforward process for determining which tests you should prioritize. Coined by CRO expert Chris Goward, the PIE Framework helps you rate your hypotheses based on three criteria:

- Potential: How much improvement can be made?

- Importance: How valuable is the page’s traffic? Are you sending PPC traffic to that landing page? Is it high-traffic?

- Ease: How easy will implementing the test be?

For each criterion, give your hypothesis a score from 1 to 10. In the end, Hiten explained, you’re left with an objective numerical ranking that will help you determine what to test first.
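If your test backlog lives in code or a spreadsheet export, PIE scoring takes only a few lines. The article doesn’t say how the three ratings are combined. This sketch assumes they are weighted equally and averaged, which is a common way to apply PIE. The `Hypothesis` class and the backlog items are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    potential: int   # 1-10: how much improvement can be made?
    importance: int  # 1-10: how valuable is the page's traffic (e.g. paid PPC, high volume)?
    ease: int        # 1-10: how easy will the test be to implement?

    def __post_init__(self):
        # Reject scores outside the 1-10 scale.
        for field_name in ("potential", "importance", "ease"):
            score = getattr(self, field_name)
            if not 1 <= score <= 10:
                raise ValueError(f"{field_name} must be between 1 and 10, got {score}")

    @property
    def pie_score(self) -> float:
        # Assumption: the three criteria are weighted equally and averaged.
        return (self.potential + self.importance + self.ease) / 3


def prioritize(hypotheses):
    """Sort hypotheses from highest to lowest PIE score."""
    return sorted(hypotheses, key=lambda h: h.pie_score, reverse=True)


if __name__ == "__main__":
    # Hypothetical backlog.
    backlog = [
        Hypothesis("Redesign pricing table", potential=9, importance=6, ease=3),
        Hypothesis("Shorten signup form", potential=8, importance=9, ease=7),
        Hypothesis("Swap hero image", potential=5, importance=9, ease=9),
    ]
    for h in prioritize(backlog):
        print(f"{h.pie_score:.1f}  {h.name}")
```

Note how the pricing-table redesign drops to the bottom despite the highest potential. It is hard to build and sits on a less important page.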

3. Start an experiment

Once you’ve done all the prep work, it’s time to don your mad scientist hat and blow some stuff up (ideally, your conversion rate).

But before you get over-excited and declare any one variation a winner, you need to…

Wait for statistical significance

You knew this was coming. If you’ve read any articles about A/B testing, then you understand that statistical significance is important. If you’ve heard that anything between 95% and 99% is kosher, get this: the data scientists at KISSmetrics don’t settle for anything under 99%.

#unwebinar @hnshah “99% statistical significance!” Holy shit. Now I’m scurred.

— Brian Park (@tisbp) November 25, 2014

As Hiten explained, successful A/B tests require diligence and patience. If a test shows a promising improvement early on, let it run as long as it needs to reach significance.
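The webinar didn’t specify which statistical test KISSmetrics uses. A two-sided, two-proportion z-test is one standard way to check whether a difference in conversion rates is significant. The sketch below uses it and treats “99% significance” as a p-value below 0.01. The function names and the visitor counts are assumptions for illustration.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test comparing conversion rates of control (a) and variation (b).

    Returns (z, p_value). A p-value below 0.01 corresponds to a 99% threshold.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation in the data (e.g. zero conversions on both sides): nothing to conclude.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - normal_cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: control 2.0% vs. variation 2.6%, 10,000 visitors each.
    z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}, significant at 99%: {p < 0.01}")
```

One caution: checking the p-value over and over and stopping the moment it dips under 0.01 inflates your false-positive rate. Deciding up front how much traffic the test needs guards against this.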

But be willing to cut your losses

If you have other tests lined up and don’t think the current one will reach statistical significance, Hiten recommended cutting your losses:

If I see a test that’s having a marginal improvement and I don’t think it’ll hit significance, I’ll turn off the test and go back to the control.

In other words, if a variation just isn’t promising, pull the plug. Return to the control and move on to the next test.
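How do you know whether a test is likely to reach significance? One approach is to estimate how many visitors it needs, then compare that with your actual traffic. Hiten didn’t describe a specific calculation. This sketch uses the common normal-approximation sample-size formula for comparing two proportions. The function names, the 80% power default and the traffic figures are assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate: float, relative_lift: float,
                              alpha: float = 0.01, power: float = 0.8) -> int:
    """Visitors needed in EACH variation to detect `relative_lift` over `baseline_rate`.

    Normal-approximation formula for a two-sided test of two proportions.
    alpha=0.01 matches a 99% significance threshold.
    """
    if relative_lift == 0:
        raise ValueError("relative_lift must be non-zero")
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 2.58 for alpha = 0.01
    z_beta = NormalDist().inv_cdf(power)            # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def days_needed(per_variation: int, daily_visitors: int, variations: int = 2) -> int:
    """Days of traffic required if visitors are split evenly across variations."""
    return math.ceil(per_variation * variations / daily_visitors)


if __name__ == "__main__":
    # Hypothetical page: 2% baseline, hoping for a 10% relative lift, 1,500 visitors/day.
    n = sample_size_per_variation(0.02, 0.10)
    print(f"{n} visitors per variation, about {days_needed(n, 1_500)} days")
```

Sometimes the answer is months of traffic to confirm a marginal lift. That is a strong hint to return to the control and move on to a bolder test.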

4. Learn from the data

You’ve done your research, you’ve formulated a detailed hypothesis and it paid off. Your winning variation killed.

Nice one! Pat yourself on the back… and then get back to work. As Hiten explained, your job isn’t done.

Write a post-mortem

For every A/B test you run, Hiten recommended writing a post-mortem.

Document everything – your hypothesis, all the data and a description of whether or not you saw positive results. Include your best guess as to why it worked (or didn’t).
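A spreadsheet works fine for this. If you’d rather keep post-mortems in a structured, searchable form, though, a small record type is enough. This sketch rests on a few hypothetical choices, none of which Hiten prescribed:

- a `PostMortem` schema with field names of our own choosing
- an `outcome` rule that counts a test as won or lost only when it was significant
- a `past_tests_tagged` helper for checking the catalogue before you repeat a test

The example values are made up.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class PostMortem:
    """One entry in a catalogue of A/B test write-ups (hypothetical schema)."""
    test_name: str
    hypothesis: str
    control_visitors: int
    control_conversions: int
    variation_visitors: int
    variation_conversions: int
    significant: bool    # did the result clear your significance threshold?
    best_guess_why: str  # your explanation of why it worked (or didn't)
    tags: List[str] = field(default_factory=list)

    @property
    def outcome(self) -> str:
        if not self.significant:
            return "inconclusive"
        control_rate = self.control_conversions / self.control_visitors
        variation_rate = self.variation_conversions / self.variation_visitors
        return "won" if variation_rate > control_rate else "lost"

    def to_json(self) -> str:
        record = asdict(self)
        record["outcome"] = self.outcome  # asdict() doesn't include properties
        return json.dumps(record, indent=2)


def past_tests_tagged(catalogue: List[PostMortem], tag: str) -> List[PostMortem]:
    """Look up earlier tests on a topic before proposing a new one."""
    return [entry for entry in catalogue if tag in entry.tags]


if __name__ == "__main__":
    entry = PostMortem(
        test_name="Signup form: 3 fields vs. 6 fields",
        hypothesis="Removing optional fields will reduce friction and lift signups.",
        control_visitors=10_000, control_conversions=200,
        variation_visitors=10_000, variation_conversions=260,
        significant=True,
        best_guess_why="Visitors abandoned the form at the phone-number field.",
        tags=["signup", "forms"],
    )
    print(entry.to_json())
```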

For Hiten, having a summary of whether tests lost or won has multiple benefits:

- You’ll have a report of findings that you can share with your team so they can learn from your experiments too.

- Over time, you’ll have compiled a comprehensive catalogue of tests to refer to so you don’t repeat your A/B testing mistakes.

- The mental exercise will stimulate your critical thinking and help you improve your natural intuition about whether future tests will do well.

This last benefit – improving your natural intuition – touches on a really important point that Hiten made about conversion rate optimization.

‘CRO is a science but it’s also an art that takes intuition and gut.’ @hnshah on @unbounce’s #unwebinar

— Sarah McCredie (@sarahailish) November 25, 2014

While looking at your A/B testing data is essential, Hiten explained that drawing from past experiences and trusting your intuition are just as important. CRO is a science, but it’s also an art.

Lather, rinse, repeat

The process that Hiten shared will help make your A/B testing more systematic.

If you haven’t yet started running A/B tests, you no longer have an excuse. The four-step process will help get you started – and it’ll motivate you to keep lining up your next great A/B test. In the words of Hiten:

If you’re not testing, you’re not learning. Always have a test running – no matter how small.

Before you know it, you’ll be running smarter tests that bring you bigger wins with less effort.

Over to you – do you have a structured A/B testing process? How does it differ from Hiten’s?
