When I first encountered A/B testing, I immediately wanted to become the type of marketer who tested everything. The idea sounded fun to me. Like being a mad scientist running experiments to prove when my work was actually “working.”

Turns out though, there’s always a long list of other things to do first… blog posts to write, campaigns to launch, and don’t get me started on the meetings! I’m not alone in this, either. A lot of marketers are just too darned busy to follow up and optimize the stuff they’ve already shipped. According to HubSpot, only 17% of marketers use landing page A/B tests to improve conversion rates.

Sure, running a split test with one or two variants always sounds easy enough. But once you take a closer look at the process, you realize just how complex it can actually be. You need to make sure you have…

- The right duration and sample size.

- Taken into account any external factors or validity threats.

- Learned how to interpret the results correctly, too.
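To put a rough number on that "right sample size" bullet, here's a minimal sketch of the textbook two-proportion sample size formula. The function name, the 10% baseline, and the 20% relative lift are all made-up assumptions for illustration, not figures from our pages or anything built into Unbounce.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # conversion rate we hope to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)  # statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical page: 10% baseline conversion, hoping to detect a 20% relative lift.
n = visitors_per_variant(baseline_rate=0.10, relative_lift=0.20)
print(n)
```

With those assumed inputs, the answer lands in the thousands of visitors per variant, which is a big part of why so many A/B tests never get finished.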

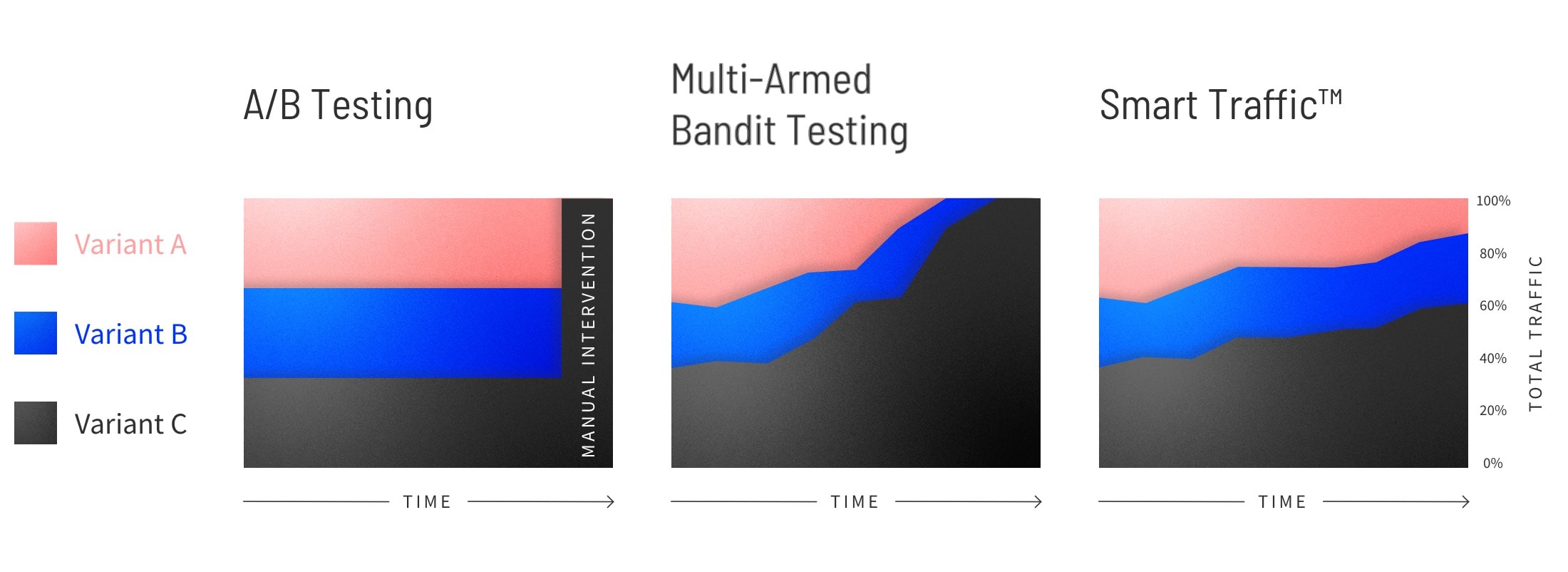

But—while there will always be a time and place for A/B testing—there’s also now an easier and faster way for marketers to optimize. Smart Traffic is a new Unbounce tool that uses the power of AI and machine learning to get you more conversions.

Every day, more marketers are using Smart Traffic to “automagically” optimize their landing pages. But whenever we launch anything new, we like to test it out for ourselves to learn alongside you (and keep you up to speed on what to try next).

Here’s what I learned after taking Smart Traffic for a test drive myself…

Shifting Your Mindset to Optimize with AI

I know many marketers are (perhaps) skeptical when it comes to promises of machine learning, artificial intelligence, or magical “easy” buttons that get them better results. But AI is all around us and it’s already changing the way we do marketing. Landing page optimization is just one more area of the job where you no longer need to do everything yourself manually.

Smart Traffic augments your marketing skills and automatically sends visitors to the landing page variant where they’re most likely to convert (based on how similar page visitors have converted before). It makes routing decisions faster than any human ever could (thank you, AI magic), and “learns” which page variant is a perfect match for each different visitor. This ultimately means no more “champion” variants. Instead, you’re free to create multiple different pages to appeal to different groups of visitors and run ‘em all at once.
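If you're curious what "routing based on how similar visitors converted" could look like in code, here's a toy sketch. To be clear, this is not Unbounce's actual algorithm (I don't have the details of that). It's a generic technique called Thompson sampling, applied separately to each visitor segment, and the class name, segment labels, and conversion rates are all hypothetical.

```python
import random
from collections import defaultdict


class SegmentRouter:
    """Toy router: for each visitor segment, favor the variant with the best track record."""

    def __init__(self, variants, seed=0):
        self.variants = list(variants)
        self.rng = random.Random(seed)
        # (segment, variant) -> [conversions, non_conversions]
        self.stats = defaultdict(lambda: [0, 0])

    def choose(self, segment):
        # Thompson sampling: draw a plausible conversion rate for each variant
        # from a Beta posterior, then send the visitor to the highest draw.
        def draw(variant):
            wins, losses = self.stats[(segment, variant)]
            return self.rng.betavariate(wins + 1, losses + 1)

        return max(self.variants, key=draw)

    def record(self, segment, variant, converted):
        self.stats[(segment, variant)][0 if converted else 1] += 1


# Made-up "true" conversion rates, used only to drive the simulation.
TRUE_RATES = {
    ("mobile", "short"): 0.30, ("mobile", "long"): 0.10,
    ("desktop", "short"): 0.10, ("desktop", "long"): 0.30,
}


def simulate(visits=4000, seed=1):
    router = SegmentRouter(["short", "long"], seed=seed)
    world = random.Random(seed + 1)
    sent = defaultdict(int)
    for _ in range(visits):
        segment = world.choice(["mobile", "desktop"])
        variant = router.choose(segment)
        converted = world.random() < TRUE_RATES[(segment, variant)]
        router.record(segment, variant, converted)
        sent[(segment, variant)] += 1
    return sent


sent = simulate()
print(dict(sent))
```

In this simulation, most mobile visitors end up on the short page and most desktop visitors on the long one, with no single "champion" in sight.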

This is very different from A/B testing and honestly—it can feel kinda weird at first. You’ve got to trust in the machine learning to figure out what works best and what doesn’t. Data scientists call this the “black box” problem: data goes in, decisions come out, but you never really get the full understanding of what happened in between.

For marketers using Smart Traffic, this means shifting your mindset and starting to think about optimization differently. Unlike A/B testing, you’re not looking for those “aha” moments to apply to your next campaign, or a one-size-fits-all “winning” variant. Instead, you’re looking to discover what works best for different subsets of your audience. This gives you unlimited creativity to try out new marketing ideas, makes it easier and less risky for you to optimize, and gives you an average conversion lift of 30% compared to splitting the traffic evenly across multiple variants. (Whoa.)

My Experiment with Smart Traffic

I know all this because I recently experimented with variant creation myself to better understand this new AI optimization mindset. I created 15 variants across two separate landing pages using Smart Traffic to discover…

- How easy is it to optimize with an AI-powered optimization tool?

- Could I quickly set up the tests in Unbounce while still getting those other to-do’s done?

- What kind of conversion lift would I see from just a few hours invested?

Creating 15 Variants in Under Two Hours

The beauty of Smart Traffic is there are no limits to how many variants you can create and it automatically starts optimizing in as few as 50 visits. Just hit the “optimize” button and you’re off to the races. Could it really be that simple?

My guinea pigs for this experiment would be two recent campaigns our marketing team had worked on: the ecommerce lookbook and the SaaS optimization guide. The team had created both of these ebook download pages in Unbounce, but we hadn’t been able to return to them and optimize very much in the months since we published.

Before starting, I consulted with Anna Roginska, Growth Marketer at Unbounce, to get her input on how I should create my variants. She advised:

You can take the ‘spaghetti at the wall’ approach, where you create a bunch of variants and just leave them to Smart Traffic to see what happens. It’s that ‘set it and forget it’ mentality. That’s interesting, but when you look at a bowl of spaghetti… There’s a lot of noodles in there. You won’t necessarily get to explain why something is working or not working.

The other approach is to be more strategic and focused. I think there’s a huge benefit to going in with a plan. Create maybe only five variants and give them each a specific purpose. Then, you can see how they perform and create new iterations for different portions of the audience.

I had two landing pages to work with, so I thought I’d give both approaches a try. But with only a few hours scheduled in my calendar to complete all these variants, I needed to move fast.

The “Spaghetti at the Wall” Approach to Variant Creation

On the ecommerce lookbook page, I wanted to spend less time planning and more time creating. Whereas in A/B testing you need a proper test hypothesis and a careful plan for each variant, Smart Traffic lets you get creative and try out new ideas on the fly. Your variants don’t have to be perfect—they just need to be different enough to appeal to new audience segments.

This meant I didn’t have to make any hard-and-fast choices about which one element to “test” on the landing page. I could create 15 different variants that varied wildly from one another. Some used different colors, some had different headlines, some completely changed up the layout of the page.

This is something you just can’t do in a traditional A/B test where you’re looking to find a “winner” and understand why it “wins.” I had to remind myself I wasn’t looking for that one variant to rule them all (or for that one variant to bring them all and in the darkness bind them). I was looking to increase the chance of conversion for every single visitor. Certain pages were going to work better for certain audiences, and that was totally fine.

I wondered, though: how many variants would be too many? Would the machine learning recognize that some of these were not anything special and just stop sending traffic to them? And how long would it take to get results? With these questions in mind, I checked back on my first set of tests one month later…

Changing up the background color

Usually, color A/B tests are pretty much a waste of time. You need a lot of data to get accurate results, and most marketers don’t actually end up learning anything useful in the end. (Color by itself means nothing; it always depends on the context of the page.)

That being said, we know there is some legitimate color theory and certain audience segments respond better to certain colors than others. So I thought it might be interesting to switch up the background on this landing page to see what would happen. And color me surprised—these variants are seeing some pretty dramatically different conversion rates:

- Pink background – 12.82%

- Green background – 21.43%

- White background – 21.74%

- Black background – 31.71%

One might start to speculate from these conversion rates that darker backgrounds perform better than the lighter backgrounds. But hold your horses, that’s thinking about this as an A/B test again. Here’s why Jordan Dawe, Senior Data Science Developer at Unbounce, says you should be cautious about drawing any conclusions from the conversion rates…

Smart Traffic is not sending visitors randomly—it’s trying to get the best traffic to the best variant. So in this case, it doesn’t mean that a black background will always convert higher than a pink background. There are likely portions of the audience going to each color that would be doing worse on others. Here’s what you can conclude: the color black is preferred by a portion of the traffic that converts highly.
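Jordan's point clicked for me once I worked through a tiny example. The sketch below uses entirely hypothetical segments and conversion rates (not our real data) to show how a variant can look like a runaway winner under targeted routing, even though it wouldn't win if every visitor saw it.

```python
# Hypothetical conversion rates per audience segment and background color.
RATES = {
    "high_intent": {"black": 0.40, "pink": 0.35},
    "low_intent": {"black": 0.05, "pink": 0.12},
}
VISITORS = {"high_intent": 1000, "low_intent": 1000}


def observed_rates(routing):
    """Per-variant conversion rate when each segment is sent to routing[segment]."""
    visits, conversions = {}, {}
    for segment, variant in routing.items():
        n = VISITORS[segment]
        visits[variant] = visits.get(variant, 0) + n
        conversions[variant] = conversions.get(variant, 0) + n * RATES[segment][variant]
    return {v: conversions[v] / visits[v] for v in visits}


def rate_if_everyone_sees(variant):
    """Overall conversion rate if all visitors were sent to one variant."""
    total = sum(VISITORS.values())
    return sum(VISITORS[s] * RATES[s][variant] for s in VISITORS) / total


# Targeted routing: each segment goes to the color it responds to best.
routed = observed_rates({"high_intent": "black", "low_intent": "pink"})
print(routed)
print(rate_if_everyone_sees("black"))
print(rate_if_everyone_sees("pink"))
```

Under routing, black shows roughly 40% and pink roughly 12%. Send everyone to a single page, though, and pink (about 23.5%) narrowly beats black (about 22.5%) in this made-up scenario. The per-variant rates reflect who was sent there as much as the page itself.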

It’s hard to shake that mindset of looking for a “winner” and trying to figure out “why” something is working. But I was starting to accept that different portions of the audience would always respond better to different variants—this was just the first time I’d been able to use AI to automatically serve up the best version.

Making big (and small) changes to the headline

For the next group of variants, I switched up the H1 in both small and big ways to see what effect that would have on the conversion rate. In some cases, this meant just swapping a single adjective (e.g., “jaw-dropping” for “drool-worthy”). In other cases, I went with a completely new line of copy altogether.

Here’s how the variants stacked up against each other:

- See 27 Sales-Ready Ecommerce Landing Pages in Our Ultimate Lookbook – 25.81%

- See 27 Stunning Ecommerce Landing Pages in Our Ultimate Lookbook – 25.93%

- Get Ready to See 27 Jaw-Dropping Ecommerce Landing Page Examples – 28.13%

- Get Serious Inspo for Supercharging Your Ecomm Sales – 35%

- See 27 Drool-Worthy Ecommerce Landing Pages in Our Ultimate Lookbook – 40%

Again, each variant yielded a different conversion rate. I wondered: if I kept testing different variations of the headline (including ones that didn’t include “landing page examples”) and found one that performed best, could I deactivate all the other headline variants and just go with the “best” one?

Here’s how Floss Taylor, Data Analyst at Unbounce, responded…

Smart Traffic doesn’t have champion variants. You don’t pick one at the end like you would in an A/B test. Although one variant may appear to be performing poorly, there could be a subset of traffic that it’s ideal for. You’re better off leaving it on long-term so it can work its magic.

Trying out different page layouts and hierarchies

The last set of variants I created messed with the actual structure and hierarchy of the page. I wanted to see if moving things around (or removing sections entirely) would influence the conversion rate. Here’s a sample of some of the experiments…

- Removing the Headline – 16.67%

- Adding a Double CTA – 21.95%

- Moving the Testimonial Up the Page – 27.27%

Nothing too surprising here. And because I had created so many variants, Smart Traffic was taking longer than usual in “Learning Mode” to start giving me a conversion lift. Here’s how Floss Taylor explains it…

Smart Traffic needs approximately 50 visitors to understand which traffic would perform well for each new variant. If you have 15 variants and ~100 visitors per month, you’re going to have a long learning period where Smart Traffic cannot make accurate recommendations. I’d suggest starting off with a lower number of variants, and only adding more once you have sufficient traffic.
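Floss's numbers are easy to sanity-check with some back-of-the-napkin math. This sketch assumes traffic is split evenly across variants during the learning period and that each variant needs about 50 visits, per the quote above. Both are simplifications, and the function name is mine.

```python
def learning_months(variants, monthly_visitors, visits_per_variant=50):
    """Rough months until every variant has seen enough visitors, assuming an even split."""
    return variants * visits_per_variant / monthly_visitors


print(learning_months(15, 100))  # 7.5
print(learning_months(5, 100))   # 2.5
```

At ~100 visitors a month, 15 variants would need around seven and a half months of learning, while five variants would get there in about two and a half.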

The “Strategic Marketer” Approach

So throwing spaghetti at the wall turned out to be… messy. (New parents beware.) For the SaaS optimization guide page, I wanted to be a bit more strategic. And I actually had a leg up for this one, because Anna Roginska, Growth Marketer at Unbounce, had already started with a Smart Traffic experiment on this page four months ago.

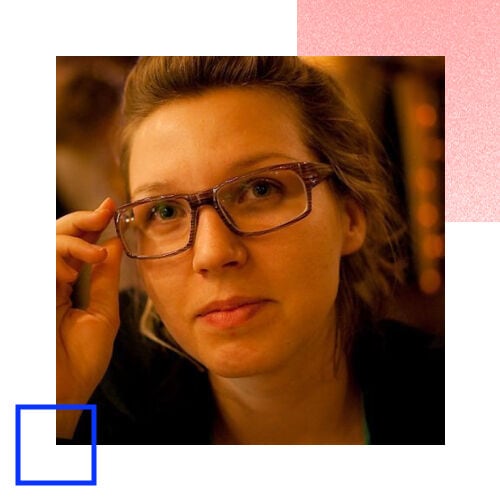

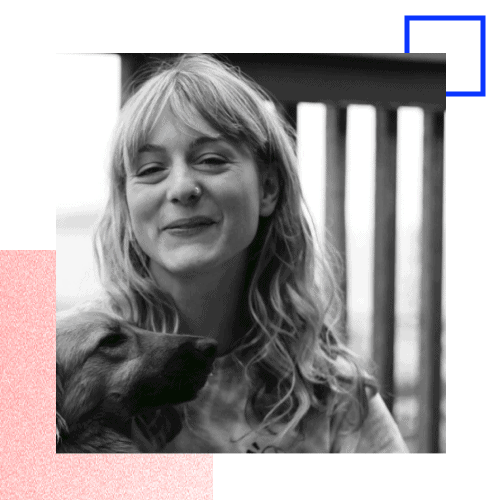

Anna had set up a test between two different variants. One had an image of the ecommerce lookbook as the hero graphic on the page, while the other used the image of conversion expert and author Talia Wolf. Anna says she decided on this second variant because of research she had seen on how photographs of people tend to convert better than products.

I put Talia up front because I knew from other tests I’ve run and research I’ve done [that photographs of] people tend to convert better. I didn’t know if it would work better in this particular case, but I was able to set up a variant and use Smart Traffic to find out. And it just so happens that the algorithm started sending way more traffic to this variant.

Anna seemed to be onto something, too: her variant was converting at nearly double the rate for a large traffic subset. And while I now know we can’t consider this a “champion” variant like in an A/B test and learn from the results, we could iterate based on her design to target new audience segments.

I created a simple spreadsheet to develop my gameplan. The goal was to create five new versions of the page that would appeal to different visitors based on their attributes.

Reducing the word count to target mobile and “ready to download” visitors

For inspiration on my first variant, I consulted the 2020 Conversion Benchmark Report. The machine learning insights here suggested that SaaS landing pages with lower word counts and easier-to-read copy tend to perform better than their long-winded counterparts.

And while the original version of our download page was easy enough to read, it did have a long, wordy intro with a lot of extra detail. Could I increase our conversion rate for a portion of our audience if I just focused on the bare essentials? I was ready to kill some darlings to find out…

- Original Long-Form Version – 10%

- Low Word Count Version – 21.43%

It seems there’s a segment of our traffic coming to this page who didn’t need to see all that extra info before they decided to fill out the form. I speculated that this variant might also perform better on mobile devices since it would be faster-loading and easier to scroll through. Interesting!

Switching the headline to target different audience segments

Next, I created an additional four page variants to speak to the different pain points and reasons our audience might want to download the guide. (Actually, this is something Talia herself recommends you do in the SaaS optimization guide.) I switched up the headline copy here, as well as some of the supporting text underneath to match. After a month, here’s what the conversion rates look like:

- Get Talia’s Guide to Optimize – 19.05%

- You Can’t Just Build – 23.08%

- Optimization is a Lot of Work – 24%

- Not Sure How to Optimize? – 33.33%

Each variant is serving a different segment of the audience, by speaking to the particular reason they want to download the guide most (e.g., maybe they don’t have the time to optimize, or maybe they don’t know how to get started). As Smart Traffic learns more about which variants perform best for which audience segments, we become that much more likely to score a conversion.

What I Learned Running These Smart Traffic Experiments

Smart Traffic absolutely makes optimization easier and faster for marketers who previously never had the time (or experience) to run A/B tests. It took me under two hours to set up and launch these experiments, and we’re already seeing some pretty impressive results just over a month later.

While the ecommerce lookbook page is still optimizing, the SaaS ebook page is showing a 12% lift in conversions compared to evenly splitting traffic among all these variants. And this is after only a month—the algorithm will keep improving to get us even better results over time. (Update: six months later, the lift in conversions has hit over 50%.)
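A quick note on what "lift" means here: it's a relative improvement over the even-split baseline, not a jump in percentage points. Here's a minimal sketch with made-up conversion counts (I'm not sharing our actual totals, and the function name is just for illustration).

```python
def relative_lift(optimized_conversions, baseline_conversions):
    """Relative improvement of the optimized result over the baseline."""
    if baseline_conversions <= 0:
        raise ValueError("baseline must be positive")
    return (optimized_conversions - baseline_conversions) / baseline_conversions


# Hypothetical: an even split would have produced 200 conversions; routing produced 224.
lift = relative_lift(224, 200)
print(f"{lift:.0%}")  # 12%
```

So a 12% lift on a page that would otherwise convert at 20% works out to about 22.4%, not 32%.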

At the same time, I did walk away with a few important lessons learned. If you’re planning to use Smart Traffic to optimize your landing pages, here are some things to keep in mind before you get started:

- There are no champion variants – Unlike traditional A/B testing, you won’t be able to point to one landing page variant at the end of your test and call it a winner. The machine learning algorithm automatically routes audiences differently based on their individual attributes, which means you have to be cautious when you’re analyzing the results.

- The more variants you create, the longer you’ll wait – While it can be tempting to throw spaghetti at the wall and create dozens of variants for your landing page, this means you’ll also have to wait longer to see what sticks. Try starting out with three to five variations and take a more strategic approach based on research in your industry. (The 2020 Conversion Benchmark Report is a great place to start for some ideas.)

- It’s (usually) better to leave low-converting variants active – Because Smart Traffic learns over time and continually improves, you’re typically better off leaving your variants active—even if their conversion rates aren’t all that impressive. The AI takes the risk out of optimization by automatically sending visitors to the page that suits them best. If you turn off variants, you may lose out on some of those conversions altogether.

It can be a lot of fun to get creative with the different page elements and try out new ideas. You just might want to come up with a bit of a plan first and be strategic with your approach. Still, it’s better to experiment and optimize with Smart Traffic (even if you make some mistakes along the way) than to never optimize at all.

(And in case you were worried, yep—I managed to get my to-do list done, too. 😅)
